September 10, 2013

The first in a series of articles about using virtual prototyping techniques to achieve more effective debug.

September 6, 2013

If you're going to be working on any aspect of multicore embedded system design, a newly published book titled "Real World Multicore Embedded Systems" will be an excellent guide.

September 3, 2013

This article looks at how different representations of a design block have different implications in a UPF-based, power-aware hierarchical design flow.

August 25, 2013

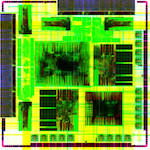

What ARM learnt when it ran a Mali GPU-based test chip through a Synopsys tool flow onto a TSMC 20nm process.

August 12, 2013

3D-IC design is ready for take-off, following several years of intense collaboration to develop the necessary tools, methodologies and flows.

July 31, 2013

How the company migrated to an OVM-based methodology to design and verify a 30-million-gate ASIC, on the path to UVM.

July 25, 2013

Formal verification techniques are becoming more widely used as the size and complexity of SoCs increase.

July 19, 2013

How Cisco eliminated iterations in the ASIC handoff of a gigahertz networking chip by using physically aware synthesis.

July 3, 2013

Clock domain crossing bugs undermine the productivity gains of moving to block-based design, but can be tackled through hierarchical formal analysis.

May 23, 2013

The growing verification challenge, and how to address it by coordinating multiple debug strategies.