FinFET variability issues challenge advantages of new process

Tool and process vendors are having to work hard to ensure that designers can overcome finFET variability issues to extract the full benefits of the new processes, according to speakers at a recent SNUG meeting in Santa Clara.

FinFET advantages

Tom Quan, director of IP platform marketing, TSMC, opened the panel by outlining the promise of finFET processes, and the challenge to foundries in making that promise available to users through certified tool flows.

He pointed out that finFETs’ superior drive current (IDsat) compared with planar equivalents means higher intrinsic gain, and therefore faster devices for the same power or lower power for the same speed. The devices also offer better matching than planar equivalents (useful in AMS designs) and exhibit the all-important low leakage, which reduces off-state current and enables lower operating voltages.

Quan argued that the key challenges to using finFETs include the quantised width of the fin, which means finFETs must be placed on gridded layouts using specially certified placers.
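To see why quantised fin width matters, consider the commonly used first-order approximation that a finFET’s effective width is the fin count multiplied by (2 × fin height + fin thickness). A designer can therefore only choose drive strength in whole-fin steps. The Python sketch below rounds a requested width up to a whole number of fins; the fin dimensions are hypothetical values chosen for illustration, not figures from any foundry PDK.

```python
import math

# Hypothetical fin geometry in nm (illustrative only, not from any real PDK).
FIN_HEIGHT_NM = 40.0
FIN_THICKNESS_NM = 10.0


def width_per_fin(height_nm: float, thickness_nm: float) -> float:
    """First-order effective width of one fin: two sidewalls plus the top."""
    return 2.0 * height_nm + thickness_nm


def quantise_width(target_nm: float,
                   height_nm: float = FIN_HEIGHT_NM,
                   thickness_nm: float = FIN_THICKNESS_NM) -> tuple[int, float]:
    """Return (fin count, achieved width) for the fewest fins that meet target_nm."""
    per_fin = width_per_fin(height_nm, thickness_nm)
    fins = max(1, math.ceil(target_nm / per_fin))
    return fins, fins * per_fin


if __name__ == "__main__":
    for target in (90.0, 200.0, 300.0):
        fins, achieved = quantise_width(target)
        print(f"target {target:.0f} nm -> {fins} fin(s), {achieved:.0f} nm")
```

With these assumed dimensions, asking for 200nm yields three fins and 270nm of effective width: the overshoot is the flexibility a planar designer would have had and a finFET designer does not.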

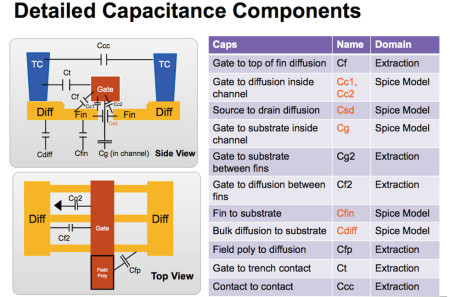

The 3D nature of the fins and surrounding middle end of line (MEoL) structures also demands accurate resistance and capacitance modelling, and therefore certified RC extractors. The finFET’s greater gate capacitance compared with planar devices also demands more accurate static timing analysis (STA), while its stronger Miller effect affects reliability and so calls for stronger power-integrity analysis and better timing accuracy.

Figure 1 A recent view of how parasitics are accounted for in finFET processes (source: Synopsys)

TSMC is using a design containing an ARM Cortex-A15 core and various PLLs as a vehicle to develop its process and to certify two flows, one for ASIC/SoC design and one for custom design. The aim is to check for issues such as correlation between tools, IP integration, and overall tool capacity and ease of use.

Among the key concerns is certifying extractors to handle the multiple fringing capacitances that exist around the fin and the coupling capacitances in the MEoL.

TSMC is also now looking for much closer correlation between STA and Spice. Quan said TSMC is using TMI, the TSMC modelling interface, to capture all the ‘extra’ parasitics around the fin (compared with planar devices) “to make sure that everything gets counted and nothing gets double-counted.”

He is also concerned that STA remains accurate at low operating voltages, and that designers recognise the importance of electromigration (EM) reliability and power-integrity checks at this more challenging node.

The good news is that TSMC is about to release version 1.0 of its ASIC/SoC design flow for its 16FF process, which will certify Synopsys’ StarRC and PrimeTime tools for 16FF Spice and DRM routing compliance.

FinFET modeling

Susan Wu, director of silicon technology at Xilinx, told the panel that Xilinx delivered its first 20nm silicon last year and is now designing in 16nm finFET processes.

Wu echoed Quan’s views on some of the challenges, such as the need to make multi-fin transistors easy to work with throughout the design flow, from schematic entry to gridded layout, and in simulation.

She said that the major challenge of using finFETs is modeling and extracting the extra R and C parasitics accurately enough that mischaracterised parasitics do not degrade circuit performance, and quickly enough to keep up with current time-to-market pressures.

“The requirement is to provide simulation accuracy and at the same time to handle the simulation efficiently,” she said. “Everything comes with a cost.”

Xilinx is working closely with TSMC, using its own benchmark circuits to assess potential circuit performance and to influence model features and accuracy. Emphasising the nature of the challenge, Xilinx has been using Synopsys’ QuickCap 3D field solver as its golden reference with which to qualify the StarRC extraction tool, as well as validating the results against silicon.

Wu said Xilinx had been achieving very good accuracy for the tool flow using ring oscillator test circuits, with a worst-case mismatch between StarRC and QuickCap of 2.3% in one set of test cases.
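A mismatch figure like this is a relative error of the extractor against the field-solver reference, with the worst case taken over all nets in a test set. A minimal Python sketch of that comparison follows; the net names and capacitance values are hypothetical stand-ins, not Xilinx’s StarRC or QuickCap data.

```python
# Hypothetical extracted capacitances in fF for a few ring-oscillator nets:
# (extractor result, field-solver reference). Values are made up for illustration.
results = {
    "ro_stage_1": (1.020, 1.000),
    "ro_stage_2": (0.985, 1.005),
    "ro_stage_3": (1.110, 1.090),
}


def mismatch_pct(extracted: float, reference: float) -> float:
    """Signed relative error of the extractor against the golden reference, in percent."""
    return 100.0 * (extracted - reference) / reference


def worst_case_mismatch(data: dict[str, tuple[float, float]]) -> tuple[str, float]:
    """Return the net with the largest absolute mismatch, and that signed mismatch."""
    name = max(data, key=lambda n: abs(mismatch_pct(*data[n])))
    return name, mismatch_pct(*data[name])


net, worst = worst_case_mismatch(results)
print(f"worst-case mismatch: {net} at {worst:+.2f}%")
```

Taking the maximum of the absolute error, rather than an average, is what makes the figure a worst-case bound that a flow can be qualified against.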

Standard cell, core and memory IP with finFETs

Brian Cline, staff design engineer at ARM, laid out what the IP licensor had learnt so far about working with first-generation finFET processes, for standard-cell, core and memory IP designs.

Standard cell

Cline echoed Wu’s point about the practicalities of the design process when he argued that the key tradeoff for finFET design is between accuracy and runtime.

He said that finFETs suffer more PVT variation than planar devices, as well as more wire-alignment variation due to LELE (litho-etch-litho-etch) double-patterning strategies, and greater line- and fin-edge roughness due to process variability. Designers also need to model how waveforms are distorted as they cross the chip, as well as the impact of noise and increased Miller effects.
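One way to see why LELE misalignment matters: with two masks, a wire printed on the second mask can shift relative to its neighbours on the first, narrowing the gap on one side and widening it on the other. Because coupling capacitance goes roughly as 1/spacing, the total coupling rises with the shift in either direction. The Python sketch below uses a crude parallel-plate approximation that ignores fringing (which a real extractor must not ignore); the spacing, wire dimensions and dielectric constant are all hypothetical.

```python
EPS0 = 8.854e-12  # vacuum permittivity, F/m


def plate_cap(length_m: float, thickness_m: float, spacing_m: float, k: float = 2.5) -> float:
    """Parallel-plate sidewall capacitance; k is an assumed low-k dielectric constant."""
    return k * EPS0 * length_m * thickness_m / spacing_m


def coupling_vs_shift(shift_nm: float, spacing_nm: float = 32.0,
                      length_um: float = 10.0, thickness_nm: float = 40.0) -> float:
    """Total coupling (fF) of a second-mask wire to both first-mask neighbours after a shift.

    All geometry defaults are hypothetical. The shift narrows one gap and widens the other.
    """
    if abs(shift_nm) >= spacing_nm:
        raise ValueError("shift must be smaller than the nominal spacing")
    s = spacing_nm * 1e-9
    d = shift_nm * 1e-9
    length = length_um * 1e-6
    t = thickness_nm * 1e-9
    total_f = plate_cap(length, t, s - d) + plate_cap(length, t, s + d)
    return total_f * 1e15  # farads -> femtofarads


for shift in (0.0, 2.0, 4.0, 8.0):
    print(f"shift {shift:4.1f} nm -> {coupling_vs_shift(shift):.4f} fF")
```

Even in this simplified model the variation is one-sided (any misalignment adds capacitance), which is why it cannot simply be averaged away and has to be modelled.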

To reduce the uncertainty that all this variability causes, which could lead to excessive guardbanding that would absorb the advantages of finFETs, standard-cell designers will have to make greater use of 3D field solvers to capture RC parasitics accurately. They’ll also have to apply one of the on-chip variability (OCV) strategies that are now emerging, as well as more sophisticated waveform analysis.

Memory IP

The constraints for memory IP design are as before, but there’s a greater sensitivity to the runtime impact of these more sophisticated analysis strategies, because of the size of the memory arrays designers work with. These large arrays also mean that interconnect resistance is becoming more important, and so IR drop and power-grid design become more critical.
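A back-of-the-envelope model shows why array size makes IR drop bite. Suppose a supply rail feeds N equally spaced taps, each drawing current I, through segments of resistance R. The segment nearest the supply carries all N currents, so the drop at the far end is R·I·N(N+1)/2, growing roughly with the square of the array dimension. The Python sketch below assumes that idealised one-dimensional rail with hypothetical values; real power-grid analysis works on the extracted mesh.

```python
def rail_drops(n_taps: int, seg_res_ohm: float, tap_current_a: float) -> list[float]:
    """Voltage drop (V) at each tap along a rail fed from one end (idealised 1D model).

    Segment k (1-based, counted from the supply) carries the current of every
    tap downstream of it, i.e. (n_taps - k + 1) * tap_current_a.
    """
    drops = []
    v = 0.0
    for k in range(1, n_taps + 1):
        v += seg_res_ohm * tap_current_a * (n_taps - k + 1)
        drops.append(v)
    return drops


# Hypothetical values: 0.05 ohm per segment, 0.1 mA per tap.
for n in (64, 128):
    print(f"{n} taps -> far-end drop {rail_drops(n, 0.05, 1e-4)[-1] * 1e3:.2f} mV")
```

Doubling the number of taps roughly quadruples the far-end drop in this model, which is why larger arrays push designers towards denser power grids.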

Memory designers will also find their design flexibility reduced, because of the quantisation of fin widths. And there are other subtleties to watch out for, such as the fact that the minimum voltage of peripheral circuits does not scale in the same way as that of the bit cells, leading to a requirement for assist circuits.

Core IP

For core IP design, Cline said that the way to cut design uncertainty was to reduce hold uncertainty. He recommended prioritising some design corners as a way to trade off accuracy against increasing runtime. And he argued that the waveform modeling some designers started using at 28nm is a requirement at 20nm and below, to account for waveform distortion and increasing back-Miller effects.

Looking ahead to second-generation finFET processes, Cline pointed out that “technology choices impact the entire ecosystem.”

Tackling finFET variability

Glen McDonnell, associate technical director, Broadcom, also brought up the tradeoff between tool runtime, design margin and over-design – and what Broadcom is doing about it.

He argued that designers were seeing greater random variation from one cell to the next on advanced processes, and so needed a more sophisticated OCV flow that can deal accurately with the way that path delay depends on path depth.
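The reason path depth matters is that independent random variations partly cancel along a path. If each cell’s delay varies randomly and independently, the mean delay of an N-cell path grows as N but its standard deviation only as √N, so the relative spread shrinks as 1/√N. The quick Monte Carlo below, in Python, assumes identical cells with uncorrelated Gaussian delay (a hypothetical 10ps mean and 1ps sigma); correlated, systematic variation would not cancel this way.

```python
import random
import statistics


def relative_sigma(depth: int, mean_ps: float = 10.0, sigma_ps: float = 1.0,
                   trials: int = 20000, seed: int = 1) -> float:
    """Monte Carlo estimate of sigma/mean of total delay for `depth` identical, independent cells."""
    rng = random.Random(seed)
    totals = [sum(rng.gauss(mean_ps, sigma_ps) for _ in range(depth)) for _ in range(trials)]
    return statistics.stdev(totals) / statistics.mean(totals)


for depth in (1, 4, 16):
    # Compare the simulated spread with the analytical 0.1 / sqrt(depth).
    print(depth, round(relative_sigma(depth), 4), round(0.1 / depth ** 0.5, 4))
```

A single worst-case per-cell margin ignores this cancellation, which is the source of the over-pessimism on deep paths that depth-aware OCV flows try to remove.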

Using a graph-based analysis approach can lead to excessive timing pessimism on critical paths. A shift to the more accurate path-based approach reduces this pessimism, but it is costly in terms of runtime.
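A toy example shows where graph-based pessimism comes from. At a gate with several fan-in paths, a graph-based analysis keeps one worst arrival time and one worst slew per pin, even if they come from different paths; a path-based analysis re-times each path with its own slew. The Python sketch below assumes a hypothetical linear delay model and made-up arrival and slew values.

```python
def gate_delay(input_slew_ps: float) -> float:
    """Hypothetical linear delay model: a slower input edge gives a slower gate."""
    return 20.0 + 0.5 * input_slew_ps


# Two fan-in paths reaching the same gate input: (arrival time, slew) in ps. Made-up values.
fanin = {"path_a": (100.0, 10.0), "path_b": (90.0, 40.0)}


def gba_arrival(branches: dict[str, tuple[float, float]]) -> float:
    """Graph-based: merge the worst arrival and the worst slew independently at the pin."""
    worst_arrival = max(t for t, _ in branches.values())
    worst_slew = max(s for _, s in branches.values())
    return worst_arrival + gate_delay(worst_slew)


def pba_arrivals(branches: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Path-based: re-time each path using its own slew."""
    return {name: t + gate_delay(s) for name, (t, s) in branches.items()}


gba = gba_arrival(fanin)
pba = max(pba_arrivals(fanin).values())
print(f"GBA {gba:.1f} ps, PBA {pba:.1f} ps, pessimism {gba - pba:.1f} ps")
```

Here the graph-based result pairs path_a’s late arrival with path_b’s slow slew, a combination no real path has. Path-based analysis avoids that, but only by re-timing paths one at a time, which is where its runtime cost comes from.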

Broadcom’s solution is to move to a parametric OCV (POCV) approach, in which the STA tool propagates the mean and the standard deviation of each cell’s delay separately, instead of just using a worst-case delay.
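The core of the parametric approach can be sketched in a few lines. Assuming cell delays are independent Gaussians (production POCV flows also deal with correlation and non-Gaussian effects, which this ignores), path means add and variances add, so the path sigma is the root-sum-square of the cell sigmas. The path is then checked at mean + n·sigma. For contrast, the sketch also stacks each cell’s own mean + n·sigma, roughly what a per-cell worst-case margin does. The cell values are hypothetical.

```python
import math

# Hypothetical per-cell (mean delay, sigma) in ps along one timing path.
path = [(12.0, 1.2), (8.0, 0.9), (15.0, 1.5), (10.0, 1.0)]


def pocv_path_delay(cells: list[tuple[float, float]], n_sigma: float = 3.0) -> tuple[float, float, float]:
    """Propagate mean and sigma separately; return (mean, sigma, mean + n_sigma * sigma)."""
    mean = sum(m for m, _ in cells)
    sigma = math.sqrt(sum(s * s for _, s in cells))  # independent variations: variances add
    return mean, sigma, mean + n_sigma * sigma


def stacked_worst_case(cells: list[tuple[float, float]], n_sigma: float = 3.0) -> float:
    """Pessimistic alternative: add up each cell's own mean + n_sigma * sigma."""
    return sum(m + n_sigma * s for m, s in cells)


for n in (2.0, 3.0, 4.0):
    _, _, late = pocv_path_delay(path, n)
    print(f"{n:.0f} sigma: POCV {late:.2f} ps vs stacked {stacked_worst_case(path, n):.2f} ps")
```

Because the mean and sigma are carried separately, re-evaluating at a different n_sigma needs no re-propagation, which is what makes ‘what-if’ runs at different sigma levels cheap.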

This gives path-based analysis accuracy with graph-based runtime, as well as fewer failures and fewer paths to recalculate. McDonnell also argued that the POCV approach simplifies ‘what-if’ analysis, since users can simply change the sigma level of process variation against which the tool reports its results.

The trick to using the approach is to get the base cell delay data for each design corner, plus its variation, and represent it in the POCV Liberty Variation Format.
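Conceptually, each timing arc then carries two lookup tables per corner: the nominal delay and its sigma, both indexed by input slew and output load. The Python sketch below stores such a pair and bilinearly interpolates them. The class name, field names, axis values and table entries are hypothetical, and the code does not parse or reproduce actual Liberty or LVF syntax.

```python
from bisect import bisect_right
from dataclasses import dataclass


def _bracket(axis: list[float], x: float) -> int:
    """Index i such that axis[i] <= x <= axis[i + 1], clamped to the table edges."""
    i = bisect_right(axis, x) - 1
    return min(max(i, 0), len(axis) - 2)


def _interp2(slews: list[float], loads: list[float], table: list[list[float]],
             slew: float, load: float) -> float:
    """Bilinear interpolation, with linear extrapolation outside the grid."""
    i, j = _bracket(slews, slew), _bracket(loads, load)
    ts = (slew - slews[i]) / (slews[i + 1] - slews[i])
    tl = (load - loads[j]) / (loads[j + 1] - loads[j])
    top = table[i][j] * (1 - tl) + table[i][j + 1] * tl
    bot = table[i + 1][j] * (1 - tl) + table[i + 1][j + 1] * tl
    return top * (1 - ts) + bot * ts


@dataclass
class VariationArc:
    """Hypothetical arc for one corner: nominal delay and sigma tables (ps) on slew (ps) x load (fF)."""
    slews: list[float]
    loads: list[float]
    delay: list[list[float]]
    sigma: list[list[float]]

    def lookup(self, slew: float, load: float) -> tuple[float, float]:
        """Return (nominal delay, sigma) at the given slew and load."""
        return (_interp2(self.slews, self.loads, self.delay, slew, load),
                _interp2(self.slews, self.loads, self.sigma, slew, load))


# Made-up 2x2 tables for a single arc at a single corner.
example_arc = VariationArc(slews=[10.0, 50.0], loads=[1.0, 5.0],
                           delay=[[10.0, 20.0], [14.0, 24.0]],
                           sigma=[[1.0, 1.6], [1.2, 1.8]])
print(example_arc.lookup(30.0, 3.0))
```

A real flow would hold one such pair per arc per corner, which is also why the data has to come from the foundry’s or the library provider’s characterisation.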

McDonnell estimates that using the POCV approach costs a 10% overhead in PrimeTime memory usage for timing updates, as well as a “slight” increase in runtimes compared to using AOCV.

Broadcom has been using POCV for four years but McDonnell says the ecosystem to support its use is still evolving.

“You need to talk to the foundry about where you will get the data,” he said.

Design tools for finFET processes

Bari Biswas, group director, R&D design group, Synopsys, summed up what the company was doing to counter the increasing process variability with which designers working with finFETs will have to contend.

Synopsys’ efforts include modeling the impact of double-patterning lithography strategies, such as space-dependent dielectric variation, at nodes of 20nm and below.

To characterise finFET device parasitics, Synopsys has been using TCAD tools. It has also changed the way the results are represented, so that there is a clear separation between the Spice model and interconnect extraction, and has extended the ITF syntax to include a definition of multigate devices.

The company has also been working to improve the characterisation of waveform propagation, in the face of increasing Miller effects, higher wire resistances and lower operating voltages, by tightening the correlation between PrimeTime and Spice.

The handling of timing margin is also evolving: from the early OCV approach, which applied a flat, global margin and so was the most pessimistic; through AOCV, which applies a less pessimistic margin based on path depth; to POCV, which uses a statistical delay distribution and so is the least pessimistic.
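The progression can be made concrete on a single path. The Python sketch below applies three margining styles to the same chain of identical cells: a flat derate, a depth-dependent derate taken from a small table, and a statistical mean + n·sigma. Every number, including the flat derate and the depth table, is hypothetical and was chosen only to illustrate the ordering described above. Real AOCV tables (which also have a distance dimension, ignored here) and POCV sigmas come from characterisation data.

```python
import math

CELL_MEAN_PS = 10.0   # hypothetical nominal cell delay
CELL_SIGMA_PS = 0.5   # hypothetical random sigma per cell
N_SIGMA = 3.0

FLAT_DERATE = 1.15    # OCV: one hypothetical global late derate
# AOCV: hypothetical late derate by path depth (the distance dimension is ignored).
AOCV_TABLE = {1: 1.15, 2: 1.12, 4: 1.09, 8: 1.06, 16: 1.05}


def aocv_derate(depth: int) -> float:
    """Use the deepest tabulated depth not exceeding `depth` (tools may interpolate instead)."""
    return AOCV_TABLE[max(d for d in AOCV_TABLE if d <= depth)]


def late_path_delay(depth: int) -> tuple[float, float, float]:
    """Late path delay (ps) under flat OCV, depth-based AOCV and statistical POCV."""
    nominal = depth * CELL_MEAN_PS
    ocv = nominal * FLAT_DERATE
    aocv = nominal * aocv_derate(depth)
    pocv = nominal + N_SIGMA * CELL_SIGMA_PS * math.sqrt(depth)  # independent cells: sigma grows as sqrt(depth)
    return ocv, aocv, pocv


for depth in (1, 2, 4, 8, 16):
    ocv, aocv, pocv = late_path_delay(depth)
    print(f"depth {depth:2d}: OCV {ocv:6.1f}  AOCV {aocv:6.1f}  POCV {pocv:6.1f} ps")
```

With these assumed numbers the three methods agree for a single cell and diverge as the path gets deeper, with the flat derate over-margining the most.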

Biswas said Synopsys had started working on finFET processes in its TCAD group in 2005, and since 2012 had been working with test chips to improve silicon correlation, IP availability and design engagement.

“This work sets the foundation for next technology at 10nm,” he added.