Charting the path for machine learning in functional verification

Machine learning (ML) is seen as a potential cornerstone technology for functional verification (FV), because verification is itself both compute-heavy and data-heavy. A new technical article surveys some of the ML strategies already being brought to bear on what remains one of the most challenging and time-consuming parts of the design flow.

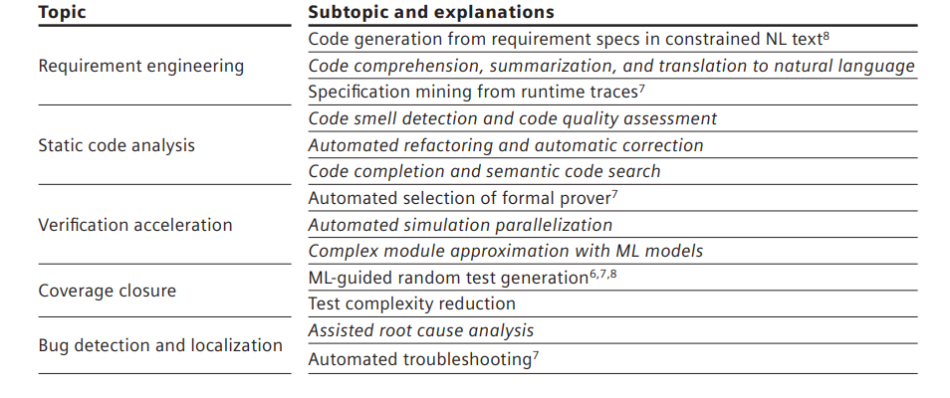

The authors, Dan Yu, Harry Foster, and Tom Fitzpatrick of Siemens EDA, look at some of the existing research and focus their observations on several key topics, summarized in Figure 1.

They are positive about ML’s potential and provide numerous examples, but the main takeaway from their review is that there is room for progress and that more cooperation is needed.

“Significant breakthroughs in ML techniques, models, and algorithms have been witnessed in recent years. Our paper found that very few of these emerging techniques are adopted by FV research, and we optimistically believe great success will be possible once they are used to tackle challenging problems in FV,” they write.

This comparative lack of adoption can be partly attributed to several challenges that ML adoption itself presents. Foremost is a lack of training datasets, and the consequent risk in trying to generate broadly usable models from limited data.

“A model trained on data from specific types of designs, coding styles, certain projects, or some niche types of data might not perform well for other types of designs, other coding styles, other projects, or other types of data,” the analysis notes.
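One common way to probe the generalization concern in the quote above is to hold out all data from one project at a time and score a model only on the project it never saw. The sketch below is illustrative and is not taken from the paper: the record layout, the project names, the toy labels, and the majority-label baseline are all hypothetical stand-ins for a real dataset and model.

```python
from collections import defaultdict


def leave_one_project_out(records):
    """Yield (held_out_project, train, test) once per project.

    `records` is a list of (project, features, label) tuples. This
    layout is an assumption made for the sketch, not a real FV format.
    """
    by_project = defaultdict(list)
    for record in records:
        by_project[record[0]].append(record)
    for held_out in sorted(by_project):
        test = by_project[held_out]
        train = [r for p, rs in by_project.items() if p != held_out for r in rs]
        yield held_out, train, test


def majority_label(train):
    """Trivial baseline 'model': the most common training label.

    Ties are broken alphabetically so the result is deterministic.
    """
    counts = defaultdict(int)
    for _, _, label in train:
        counts[label] += 1
    return max(sorted(counts), key=counts.get)


def accuracy_per_project(records):
    """Accuracy of the baseline on each held-out project."""
    scores = {}
    for held_out, train, test in leave_one_project_out(records):
        guess = majority_label(train)
        hits = sum(1 for _, _, label in test if label == guess)
        scores[held_out] = hits / len(test)
    return scores


# Made-up toy records, purely illustrative.
toy = [
    ("cpu", [0.1], "pass"), ("cpu", [0.2], "pass"),
    ("gpu", [0.9], "pass"), ("gpu", [0.8], "fail"),
    ("noc", [0.5], "fail"), ("noc", [0.4], "fail"),
]
scores = accuracy_per_project(toy)
```

Reporting one score per held-out project, rather than a single pooled number, makes it visible when a model that looks acceptable on average fails on a design family it was not trained on.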

This challenge is perhaps most acute for industrial EDA and is joined there by two other concerns.

Model scalability faces similar problems with extrapolation: “A model might perform well for a relatively simple design. However, there is no guarantee it would work equally well when applied to a large-scale design with billions of RTL gates with code from multiple development teams spanning multiple continents. To benefit from these models, additional investment in further model compression, additional computing resources (e.g., ML accelerators or establishing MLops) might be inevitable.”

The third issue for industrial EDA revolves around data governance and transportability. Datasets have different owners, and these often impose necessary constraints on how much can be shared with other companies and organizations.

Ultimately, the authors conclude that the application of ML to functional verification is at a comparatively early stage, though there are promising applications.

To move beyond that early stage, though, making larger datasets available needs to be the first near-term goal.

“Working experience and review of many research results have indicated that the application of ML in FV cannot advance at a much faster pace without substantial, quality datasets being made available,” the authors argue.

“The paper calls for efforts to build more extensive datasets to train more complex big ML models. Such datasets will afford the FV community to make research results more comparable, repeatable, and credible.”
