Litho hotspot analysis gets machine-learning turbocharge

Samsung Foundry and Mentor, a Siemens business, are leveraging machine learning (ML) to cut the time and computational resources needed for lithography hotspot analysis.

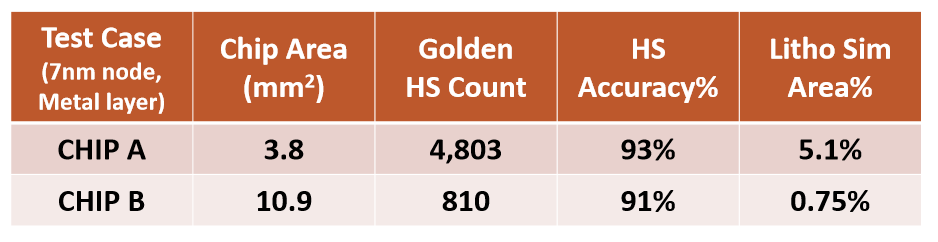

In a paper at July’s virtual Design Automation Conference, the two companies described an ML project that has already been used on two test chips. On both, it cut the area requiring full hotspot simulation to between 1% and 5% of the design while identifying 90% of the potential hotspots in advance.
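The screening idea can be illustrated with a minimal triage sketch: an ML model scores every layout clip, only the highest-scoring clips are sent to full simulation until an area budget is used up, and recall measures how many true hotspots land inside that subset. The `Clip` type, the scores, and the greedy selection policy are all hypothetical, chosen for illustration; the article does not describe how Samsung and Mentor select clips.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    """A layout window to be screened (hypothetical representation)."""
    name: str
    area: float       # clip area, arbitrary units
    score: float      # ML-predicted hotspot probability in [0, 1]
    is_hotspot: bool  # ground truth from full simulation, used only for evaluation


def select_for_simulation(clips, area_budget_fraction):
    """Greedily pick the highest-scoring clips until the area budget is spent."""
    budget = area_budget_fraction * sum(c.area for c in clips)
    selected, used = [], 0.0
    for clip in sorted(clips, key=lambda c: c.score, reverse=True):
        if used + clip.area > budget:
            continue  # this clip would overflow the budget; try the next one
        selected.append(clip)
        used += clip.area
    return selected


def hotspot_recall(clips, selected):
    """Fraction of true hotspots that fall inside the simulated subset."""
    hotspots = {c.name for c in clips if c.is_hotspot}
    if not hotspots:
        return 1.0
    caught = {c.name for c in selected if c.is_hotspot}
    return len(caught) / len(hotspots)


if __name__ == "__main__":
    # Toy data: 100 equal-area clips; the model scores clip i as i / 100.
    clips = [Clip(f"c{i}", 1.0, i / 100, i in {40, 95, 97, 99}) for i in range(100)]
    chosen = select_for_simulation(clips, area_budget_fraction=0.05)
    print(len(chosen), hotspot_recall(clips, chosen))  # prints: 5 0.75
```

In this toy run, simulating 5% of the area catches three of the four hotspots; the one the model scored low (`c40`) is missed, which is the trade-off any ML pre-filter accepts in exchange for skipping most of the simulation.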

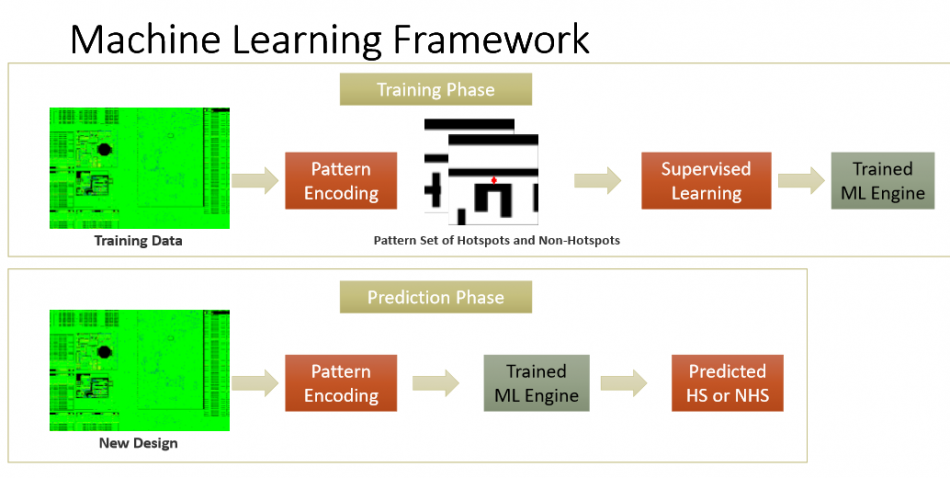

The ML system was trained using Synthetic Realistic Layout Generation for 7nm devices. The aim was to make it capable of extensive hotspot analysis and to refine hotspot detection before real-world designs become available for leading-edge process nodes (Figure 1).

The training and prediction processes for ML-based litho hotspot detection (Mentor/Samsung Foundry/DAC)

The system has a supervised learning structure based on a deep neural network. Its training data is generated from foundry inputs to Mentor’s Calibre LSG (layout schema generation) tool.

These inputs range from basic design rule checks (e.g., width, space, area, notch, and end-to-end spacing) to techniques that represent an appropriate range of design styles and variations (e.g., regular and wide metals of various lengths, sparsely and densely populated areas, and the percentages of non-preferred-direction and off-grid wires).
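To make the idea of rule-driven synthetic layouts concrete, here is a toy generator that places random horizontal wires on tracks while honoring a few of the rule types listed above (width, space, end-to-end, minimum length), with knobs for wide-metal share and density. It is a minimal sketch under stated assumptions: the `Rules` values are invented, not real 7nm rules; area, notch, non-preferred-direction and off-grid wires are not modeled; and it does not reflect how Calibre LSG actually works.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Rules:
    """Hypothetical design-rule values in nm (not real 7nm rules)."""
    min_width: int = 20       # regular metal width
    wide_width: int = 40      # wide metal width
    min_space: int = 20       # edge-to-edge space between adjacent tracks
    min_end_to_end: int = 30  # gap between wire ends on the same track
    min_length: int = 40      # shortest allowed wire


@dataclass(frozen=True)
class Rect:
    x0: int
    y0: int
    x1: int
    y1: int


def generate_layout(rules, window, n_tracks, wide_prob, density, seed=None):
    """Place random horizontal wires on tracks inside a window x window square.

    wide_prob sets the share of wide-metal tracks; density is the chance that
    each candidate wire slot is filled (low = sparse, high = dense).
    """
    rng = random.Random(seed)
    rects, y = [], 0
    for _ in range(n_tracks):
        width = rules.wide_width if rng.random() < wide_prob else rules.min_width
        if y + width > window:
            break  # no room left for another track
        x = 0
        while True:
            length = rng.randint(rules.min_length, 4 * rules.min_length)
            if x + length > window:
                break  # wire would run past the window edge
            if rng.random() < density:
                rects.append(Rect(x, y, x + length, y + width))
            # Advance past this slot plus a legal end-to-end gap.
            x += length + rng.randint(rules.min_end_to_end, 3 * rules.min_end_to_end)
        # Advance to the next track, keeping at least min_space clearance.
        y += width + rng.randint(rules.min_space, 2 * rules.min_space)
    return rects


def check_rules(rects, rules):
    """Return a list of rule violations (empty if the layout is clean)."""
    violations = []
    for r in rects:
        if r.y1 - r.y0 < rules.min_width:
            violations.append(("width", r))
        if r.x1 - r.x0 < rules.min_length:
            violations.append(("length", r))
    for i, a in enumerate(rects):
        for b in rects[i + 1:]:
            x_gap = max(b.x0 - a.x1, a.x0 - b.x1)  # negative if x-extents overlap
            y_gap = max(b.y0 - a.y1, a.y0 - b.y1)  # negative if y-extents overlap
            if y_gap < 0 and x_gap < rules.min_end_to_end:
                violations.append(("end-to-end", a, b))  # same track, ends too close
            elif x_gap < 0 and y_gap < rules.min_space:
                violations.append(("space", a, b))  # neighboring tracks too close
    return violations
```

Sweeping `wide_prob` and `density` (and the seed) yields many distinct, rule-clean layouts, which is the general point of synthetic generation: covering a range of design styles before any customer design exists.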

The training data must also include both hotspots and non-hotspots, so that the system learns to distinguish between them before it is deployed on real designs.
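Why both classes matter can be shown with a small supervised classifier. The sketch below swaps in plain logistic regression as a simplified stand-in for the deep neural network the companies used, and the two features (a normalized minimum spacing and a local pattern density) are hypothetical. It refuses to train unless both hotspot and non-hotspot examples are present, since a single-class dataset gives the model nothing to discriminate against.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_hotspot_classifier(features, labels, lr=1.0, epochs=5000):
    """Fit logistic-regression weights by batch gradient descent.

    labels: 1 = hotspot, 0 = non-hotspot. Both classes must be present.
    """
    if set(labels) != {0, 1}:
        raise ValueError("training data needs both hotspot and non-hotspot examples")
    n, d = len(features), len(features[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(features, labels):
            # Prediction error for one clip: predicted probability minus label.
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        # Step against the average gradient of the log loss.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def is_hotspot(w, b, x, threshold=0.5):
    """Classify one clip's feature vector."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= threshold


if __name__ == "__main__":
    # Hypothetical features: [min spacing / 100 nm, local density].
    X = [[0.20, 0.90], [0.25, 0.80], [0.22, 0.85], [0.30, 0.95],  # hotspots
         [0.60, 0.40], [0.70, 0.50], [0.55, 0.30], [0.80, 0.60]]  # non-hotspots
    y = [1, 1, 1, 1, 0, 0, 0, 0]
    w, b = train_hotspot_classifier(X, y)
    correct = sum(is_hotspot(w, b, x) == bool(t) for x, t in zip(X, y))
    print("training accuracy:", correct / len(y))  # prints: training accuracy: 1.0
```

A real detector would work on layout-clip images or richer pattern encodings and a far larger, usually imbalanced dataset; the toy version only shows the supervised setup of labeled positives and negatives.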

Besides greatly reducing the need for litho hotspot simulation capacity (a workload that strains the server farms of even the larger design houses), the resulting system analyzed and graded chips up to 20X faster than a traditional model-based analysis. Further results are shown in Figure 2.
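A back-of-the-envelope model shows why shrinking the simulated area matters. Assume, for illustration only, that full simulation time scales linearly with layout area, let $T_{\text{full}}$ be the time to simulate the whole chip, $T_{\text{ML}}$ the time for ML inference over the whole chip, and $f$ the fraction of area still sent to full simulation. The speedup over simulating everything is then bounded by $1/f$:

$$
S \;=\; \frac{T_{\text{full}}}{T_{\text{ML}} + f\,T_{\text{full}}}
\;=\; \frac{1}{T_{\text{ML}}/T_{\text{full}} + f}
\;\le\; \frac{1}{f}
$$

Under this simple model, $f = 0.05$ caps the speedup at 20X and $f = 0.01$ at 100X, with inference cost eating into the bound. The article does not say how the reported 20X figure was measured, so this is an illustration of the trade-off, not a reconstruction of that result.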

Full details of the research are available in the paper ‘How to train your dragon before you have machine learning training data’ by Kang et al., in the 2020 DAC proceedings.