Synopsys aims at fast real-time apps with ARC HS family

Synopsys has launched its second family of ARC configurable-processor cores to use the version 2 architecture introduced in October 2011, this time aiming at high-performance embedded processing in areas such as video, security and data storage.

“These are the highest performance processors that we have ever offered,” said Mike Thompson, senior manager of product marketing for ARC processors and subsystems at Synopsys.

With the HS architecture, the company claims to be able to hit a clock speed of 2.2GHz on a 28nm process such as TSMC’s high-performance mobile (HPM), delivering around 4200 Dhrystone MIPS. Designed for embedded applications where it is likely to run alongside general-purpose processors such as ARM or MIPS, the HS architecture has no memory-management unit (MMU), although it does include a memory-protection unit to stop tasks from overwriting each other’s memory spaces.
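The practical difference between the two units is that an MMU translates virtual addresses into physical ones, whereas an MPU leaves addresses untranslated and only checks each access against a set of permitted regions. The sketch below models that check. The `ToyMPU` and `Region` names, the per-task region tables and the addresses are hypothetical; they do not describe the HS MPU's actual region count, granularity or fault handling.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Region:
    base: int        # start address of the region (hypothetical layout)
    size: int        # length in bytes
    writable: bool   # whether the owning task may store to it

    def contains(self, addr: int) -> bool:
        return self.base <= addr < self.base + self.size


class ToyMPU:
    """Region-based protection check with no address translation (unlike an MMU).

    Hypothetical model: per-task region lists are kept in a dict for simplicity,
    whereas real hardware holds a small, fixed number of region settings.
    """

    def __init__(self) -> None:
        self.regions: Dict[int, List[Region]] = {}

    def grant(self, task: int, region: Region) -> None:
        self.regions.setdefault(task, []).append(region)

    def check_store(self, task: int, addr: int) -> bool:
        # The address is used as-is; the MPU only decides allow or deny.
        return any(r.contains(addr) and r.writable for r in self.regions.get(task, []))


mpu = ToyMPU()
mpu.grant(task=1, region=Region(base=0x1000, size=0x1000, writable=True))
mpu.grant(task=2, region=Region(base=0x2000, size=0x1000, writable=True))

print(mpu.check_store(1, 0x1800))  # True: inside task 1's own region
print(mpu.check_store(1, 0x2800))  # False: task 2's memory, so the store is blocked
```

In this model a denied store simply returns `False`; on real hardware it would raise a protection fault for the operating system to handle.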

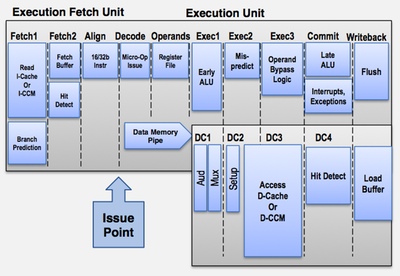

As the emphasis is on data throughput at low power, the company has opted for a relatively deep pipeline.

Figure 1 Structure of the ARC ten-stage pipeline

“The people we work with often struggle with the problem of having enough performance while also meeting their power budgets,” said Thompson. “Typically, the way to achieve performance is to go superscalar or go multithreaded. But those both add a lot of transistors. Because we are focused on the embedded space we can leave out features that become cumbersome in other architectures.”

One potential penalty of superpipelining is that code has to be scheduled carefully to flow through the pipeline without introducing ‘bubbles’, which appear when instructions cannot be scheduled to hide memory latency. The pipeline contains a novel late-ALU stage that reduces the impact of delays caused by higher-latency loads and stores on subsequent arithmetic operations. The core also performs limited out-of-order instruction completion to avoid some common causes of pipeline stalls.
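Synopsys does not give stage-by-stage timings for the HS pipeline here, so the following is a simplified model under stated assumptions: an in-order, single-issue pipeline with full forwarding, and hypothetical stage numbers. It shows why letting an instruction that depends on a load do its arithmetic later in the pipeline can remove load-use stalls.

```python
def load_use_stalls(load_result_stage: int, consumer_exec_stage: int, distance: int = 1) -> int:
    """Stall cycles between a load and a dependent ALU instruction.

    Simplified model: in-order, single-issue, full forwarding. Stages are
    numbered from 0 and an instruction issued at cycle t occupies stage s
    during cycle t + s. The load issues at cycle 0 and its data can be
    forwarded once `load_result_stage` completes; the consumer issues
    `distance` cycles later and needs the data when it enters its ALU stage.
    """
    ready_cycle = load_result_stage + 1            # first cycle the data can be used
    needed_cycle = distance + consumer_exec_stage  # cycle the consumer enters its ALU stage
    return max(0, ready_cycle - needed_cycle)


# Sanity check: a classic five-stage pipeline (EX = stage 2, MEM = stage 3)
# gives the textbook one-cycle load-use penalty.
print(load_use_stalls(load_result_stage=3, consumer_exec_stage=2))  # 1

# Hypothetical ten-stage layout: load data returns at stage 6, with an early
# ALU at stage 4 and a late ALU at stage 7. These numbers are illustrative only.
print(load_use_stalls(load_result_stage=6, consumer_exec_stage=4))  # 2 (early ALU)
print(load_use_stalls(load_result_stage=6, consumer_exec_stage=7))  # 0 (late ALU)
```

The model ignores the cost on the other side: results produced by a late ALU are themselves available later, so in a real design the benefit depends on the instruction mix.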

Thompson said the designers reworked the branch-prediction unit to make it more accurate, reducing how often the pipeline must be flushed after a wrongly predicted branch, and added a unit to speed up the handling of the mispredictions that remain.
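A common back-of-envelope way to see why both changes matter: the average misprediction cost per instruction is the branch frequency multiplied by the misprediction rate and by the flush penalty, and that penalty tends to grow with pipeline depth. The sketch below applies this formula with made-up figures; the branch mix, accuracy and penalty values are assumptions, not ARC HS data.

```python
def effective_cpi(base_cpi: float, branch_fraction: float,
                  mispredict_rate: float, penalty_cycles: float) -> float:
    """Average cycles per instruction including branch-misprediction flushes.

    Each mispredicted branch adds `penalty_cycles` of flushed pipeline work,
    so the extra cost per instruction is
    branch_fraction * mispredict_rate * penalty_cycles.
    """
    return base_cpi + branch_fraction * mispredict_rate * penalty_cycles


# Illustrative workload: 20% of instructions are branches, ideal CPI of 1.0.
baseline = effective_cpi(1.0, 0.20, mispredict_rate=0.10, penalty_cycles=8)
better_predictor = effective_cpi(1.0, 0.20, mispredict_rate=0.05, penalty_cycles=8)
faster_recovery = effective_cpi(1.0, 0.20, mispredict_rate=0.05, penalty_cycles=6)

print(f"{baseline:.2f} {better_predictor:.2f} {faster_recovery:.2f}")  # 1.16 1.08 1.06
```

A more accurate predictor shrinks the misprediction rate, while faster recovery shrinks the penalty term; with these assumed numbers each improvement removes part of the overhead independently.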

“Keeping the pipeline full, especially with a ten-stage pipeline, is very important in the embedded environment,” Thompson said. “We also looked at what we could do at a systems level.”

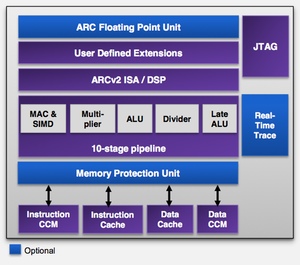

Figure 2 Block diagram of the ARC HS processor core

To support real-time applications, the HS34 version of the core can work with tightly coupled memory blocks rather than a cache. The HS36 introduces level-one instruction and data caches up to 64Kbyte in size for systems that can tolerate the lower determinism of running from cache. For high-speed I/O, the processor can be configured to provide direct access through memory-mapped registers rather than forcing all peripherals onto an AMBA bus. “We also have support for I/O coherency,” said Thompson.
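To illustrate why running from cache is less deterministic than running from tightly coupled memory, here is a toy latency model. The TCM latency, the cache geometry (a tiny direct-mapped cache) and the hit and miss cycle counts are all hypothetical, chosen only to make the effect visible; they are not HS34 or HS36 figures.

```python
from typing import List, Optional


def tcm_latencies(addresses: List[int], latency: int = 1) -> List[int]:
    """Tightly coupled memory: every access costs the same fixed latency."""
    return [latency for _ in addresses]


def cache_latencies(addresses: List[int], num_lines: int = 4, line_bytes: int = 32,
                    hit: int = 1, miss: int = 20) -> List[int]:
    """Direct-mapped cache: latency depends on what earlier accesses left behind."""
    tags: List[Optional[int]] = [None] * num_lines
    result = []
    for addr in addresses:
        line = addr // line_bytes   # which memory line the address falls in
        index = line % num_lines    # cache slot that line maps to
        tag = line // num_lines     # identifies which memory line occupies the slot
        if tags[index] == tag:
            result.append(hit)
        else:
            tags[index] = tag       # fill the slot, evicting the previous line
            result.append(miss)
    return result


# Address 0x80 maps to the same slot as address 0, so it evicts it.
trace = [0x00, 0x04, 0x80, 0x00, 0x04]
print(tcm_latencies(trace))    # [1, 1, 1, 1, 1]
print(cache_latencies(trace))  # [20, 1, 20, 20, 1]
```

The second access to address 0 costs 20 cycles instead of 1 purely because of the access in between, which is the kind of history-dependent timing that makes worst-case execution time harder to bound.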

Like all of the other ARC cores, those in the HS series are configurable and support custom instructions. “We have optionally a complete second register file so there is no need to save and restore registers for a context switch,” Thompson said.
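The benefit of a second register file can be shown with a toy model that counts the memory traffic needed to service an interrupt. The `ToyCore` class, the 32-register count and the rule that an interrupt handler switches to the second bank are hypothetical assumptions for illustration; the article does not describe how the HS core selects between register files.

```python
from typing import List, Optional, Tuple


class ToyCore:
    """Toy model of a register file with one or two banks.

    Hypothetical: the register count and the rule that interrupts switch to
    bank 1 are illustrative assumptions, not the ARC HS programmer's model.
    """

    def __init__(self, num_regs: int = 32, dual_bank: bool = False) -> None:
        self.num_regs = num_regs
        self.dual_bank = dual_bank
        self.banks: List[List[int]] = [[0] * num_regs for _ in range(2 if dual_bank else 1)]
        self.active = 0                          # bank the core is currently using
        self.saved: Optional[List[int]] = None   # registers spilled to memory
        self.memory_ops = 0                      # loads + stores spent on switching

    @property
    def regs(self) -> List[int]:
        return self.banks[self.active]

    def enter_interrupt(self) -> None:
        if self.dual_bank:
            self.active = 1                      # handler gets its own registers
        else:
            self.saved = list(self.banks[0])     # store every register to memory
            self.memory_ops += self.num_regs

    def exit_interrupt(self) -> None:
        if self.dual_bank:
            self.active = 0                      # task's registers were never touched
        else:
            if self.saved is None:
                raise RuntimeError("exit_interrupt without matching enter_interrupt")
            self.banks[0][:] = self.saved        # load every register back
            self.memory_ops += self.num_regs
            self.saved = None


def service_interrupt(core: ToyCore) -> Tuple[int, int]:
    core.regs[0] = 42        # task leaves a live value in register 0
    core.enter_interrupt()
    core.regs[0] = 99        # handler uses register 0 as scratch
    core.exit_interrupt()
    return core.regs[0], core.memory_ops


print(service_interrupt(ToyCore(dual_bank=False)))  # (42, 64)
print(service_interrupt(ToyCore(dual_bank=True)))   # (42, 0)
```

Both configurations preserve the task's value, but only the single-bank core pays for it in loads and stores. A handler on a single-bank core may save only the registers it actually uses, so the real saving is usually smaller than this all-registers model suggests.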