Synopsys offers ASIL D ready embedded vision IP for ADAS and autonomous vehicle SoCs

Synopsys has extended its range of semiconductor IP for advanced driver assistance system (ADAS) and autonomous vehicle SoCs with the launch of embedded vision processor IP blocks that carry a set of safety enhancements. The DesignWare EV6x processors with Safety Enhancement Package are said to be suitable for automotive SoCs that have to meet the requirements of automotive safety integrity levels (ASIL) B, C, and D, as defined in the ISO 26262 functional safety standard.

The processor IP has hardware safety features, safety monitors, and lockstep capabilities for safety-critical designs, which Synopsys says should help designers meet ISO 26262’s most stringent functional-safety and fault-coverage requirements. The configurable cores integrate scalar, vector DSP, and convolutional neural network (CNN) processing units to support general processing and control, image pre- and post-processing, and machine-learning strategies.

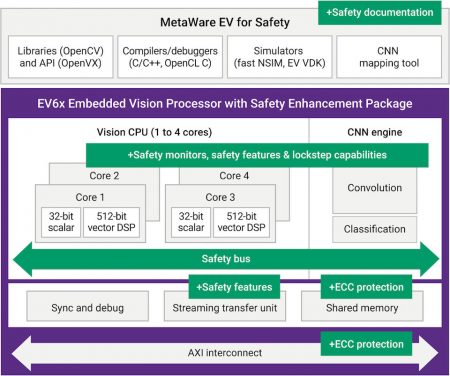

Synopsys has been gradually working through its IP portfolio to upgrade key functional blocks with features that make it easier for SoC designers to meet the requirements of ISO 26262. In this latest announcement, the configurable embedded vision processor has been upgraded with ECC support on its caches and closely coupled memories, a memory protection unit, lockstep capabilities, a dedicated safety monitor, a windowed watchdog timer for each core, and ECC protection on the AXI bus interconnect (see Figure 1). It also gains a safety bus interface, which gives the processor a dedicated connection to an external ‘safety island’ that protects the system bring-up process. The core can also be implemented with optional logic built-in self-test features, so that hardware or software can test its operation.

Figure 1: Safety features make the EV6x embedded vision processor IP easier to use in ISO 26262-compliant designs (Source: Synopsys)

The safety features are also backed up by enhanced safety documentation, a vital part of the ISO 26262 accreditation process.
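To make the windowed watchdog timer mentioned among the safety features more concrete, here is a minimal Python sketch of the general technique. A windowed watchdog flags a fault if it is serviced too early as well as too late, which catches runaway code that services the timer in a tight loop. The class name, method names, and timing values are hypothetical and chosen for illustration; they do not describe the EV6x hardware or its register interface.

```python
from dataclasses import dataclass


@dataclass
class WindowedWatchdog:
    """Toy model of a windowed watchdog. All names and timings are hypothetical."""

    window_open: float   # earliest allowed time between services
    window_close: float  # latest allowed time between services (timeout)
    last_service: float = 0.0
    faulted: bool = False

    def service(self, now: float) -> bool:
        """Service the watchdog at time `now`.

        Returns True while no fault has been latched. A service that arrives
        before the window opens or after it closes latches a fault.
        """
        elapsed = now - self.last_service
        if elapsed < self.window_open or elapsed > self.window_close:
            self.faulted = True
        self.last_service = now
        return not self.faulted

    def expired(self, now: float) -> bool:
        """True if a fault is latched or the timeout has passed unserviced."""
        return self.faulted or (now - self.last_service) > self.window_close


wd = WindowedWatchdog(window_open=10.0, window_close=20.0)
on_time = wd.service(15.0)    # inside the 10..20 window
too_early = wd.service(18.0)  # only 3 time units after the last service
```

In this sketch the second call latches a fault because it arrives before the window opens. A real system would typically respond to such a fault by resetting the core or signalling a safety monitor.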

Software support includes an ASIL D Ready MetaWare EV development toolkit for safety, which provides tools, runtime software, and libraries for developing embedded vision and artificial intelligence applications for the processors. The toolchain supports software development in the C/C++ and OpenCL C programming languages, and open vision standards such as OpenVX and OpenCV. It also includes a tool to map neural network graphs trained on popular frameworks such as Caffe and TensorFlow onto the EV6x’s processing resources. This partitioning tool can also be used to split graphs across CNN resources on multiple EV6x processors, and to explore partitioning by other criteria such as image areas, feature maps, or frames to achieve the most efficient mapping.
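As a rough illustration of what partitioning by image area can mean, the Python sketch below splits the rows of an image into one contiguous band per CNN engine, with an optional overlap so that convolutions near a band edge can see neighbouring rows. It is not the MetaWare EV tool's algorithm, which the announcement does not describe; the function name `split_rows` and its parameters are hypothetical.

```python
from typing import List, Tuple


def split_rows(height: int, engines: int, overlap: int = 0) -> List[Tuple[int, int]]:
    """Split image rows [0, height) into one band per engine.

    Each band is a half-open (start, end) row range. `overlap` extends every
    band by that many rows on each side, clamped to the image bounds.
    Hypothetical helper for illustration only.
    """
    if engines < 1 or height < engines or overlap < 0:
        raise ValueError("need 1 <= engines <= height and overlap >= 0")

    base, extra = divmod(height, engines)
    bands = []
    start = 0
    for i in range(engines):
        # The first `extra` bands take one additional row each.
        end = start + base + (1 if i < extra else 0)
        bands.append((max(0, start - overlap), min(height, end + overlap)))
        start = end
    return bands


bands = split_rows(1080, 4)
bands_with_halo = split_rows(1080, 4, overlap=1)
```

For a 1080-row frame and four engines, each engine in this sketch receives 270 rows, or slightly more when an overlap is requested. Splitting by feature maps or by frames would follow the same pattern along a different axis.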

One of the most striking things about this announcement is how much performance the processor IP can be configured to deliver. The processor can be configured with up to four Vision processors, each of which contains a 32-bit scalar processor and a 512-bit-wide vector DSP. The CNN engine can be configured with up to 3520 multiply/accumulate (MAC) units. Synopsys says, based on a post-layout simulation of a 16nm FinFET process running in worst-case conditions, that the core should deliver up to 9 TOPS from the CNN engine. The vector DSP, implemented with a ten-stage pipeline, should run at 1.2GHz under the same conditions.
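The relationship between MAC count, clock rate, and peak throughput can be checked with some quick arithmetic. The Python sketch below assumes that each multiply/accumulate counts as two operations (one multiply and one add), a common convention that the announcement does not state. The announcement also does not give the CNN engine's clock rate; the 1.2GHz figure is quoted for the vector DSP. The function names are hypothetical.

```python
def peak_tops(mac_units: int, clock_hz: float, ops_per_mac: int = 2) -> float:
    """Peak throughput in tera-operations per second.

    Assumes every MAC unit completes one multiply/accumulate per cycle and
    that each MAC counts as `ops_per_mac` operations (assumption: 2).
    """
    return mac_units * ops_per_mac * clock_hz / 1e12


def clock_for_tops(target_tops: float, mac_units: int, ops_per_mac: int = 2) -> float:
    """Clock rate in Hz needed to reach `target_tops` under the same assumptions."""
    return target_tops * 1e12 / (mac_units * ops_per_mac)


# Clock the largest configuration would need to reach the quoted 9 TOPS.
needed_hz = clock_for_tops(9.0, 3520)

# Peak throughput of the same configuration if it ran at 1.2 GHz.
tops_at_1p2ghz = peak_tops(3520, 1.2e9)
```

Under these assumptions, 3520 MAC units would need to run at roughly 1.28GHz to reach 9 TOPS, and would peak at about 8.4 TOPS at 1.2GHz. These are idealised figures that ignore memory bandwidth and utilisation.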

Synopsys offers some examples of how the core might be configured to support various ADAS and autonomous driving functions. A facial recognition system used to detect distracted driving might only have to reach ASIL B, and so could be implemented using an EV61 and an 880-MAC CNN engine. However, a safety-critical function such as pedestrian and object detection, which has to meet ASIL D requirements, is likely to need the EV6x configured as an EV64 (four Vision cores) with a 3520-MAC CNN engine.

Find out more

Read the white paper: The Impact of AI on Autonomous Vehicles

Watch the video: Addressing Automotive Safety Requirements with ASIL D Ready Vision Processor IP