How Google and Qualcomm exploit real-world HLS and HLV

By taking a pragmatic approach, the two technology giants have comfortably adopted high-level synthesis and verification – and have shared their experiences.

A persistent myth is that both high-level synthesis (HLS) and high-level verification (HLV) should still be treated as experimental or proof-of-concept technologies. In fact, many companies have been deploying and benefiting from both for more than a decade.

This article discusses two examples involving household names that have recently moved into or expanded their presence in the HLS/HLV world. But it also limits itself to one aspect of their efforts: their pragmatism.

The companies are Google and Qualcomm.

Both have gone on to write in detail about their positive experiences with HLS/HLV. At the end of this article, there are links to those articles that describe their experiences more fully. The point here, though, is that they illustrate the use of HLS/HLV for competitive advantage.

The benefits of high-level synthesis and verification

HLS was originally conceived as the necessary next step up in abstraction from RTL in the design flow. HLV has grown alongside it more recently.

What HLS and HLV share is the objective of slashing project cycle-time by raising design and verification tasks from RTL to a C++ ‘system-based’ yet still stable environment.

That overarching goal is today achieved in a number of ways. Some of the most important include:

- Access to a growing number of fully synthesizable C models that translate directly into RTL yet are more simply defined and specified.

- The ability to perform architectural optimization early in the design flow in the knowledge that budgets for power, performance and area will be met by the resulting RTL, and also that the hardware-software split in the design can be defined early on.

- Based on the first two capabilities, the option to hand over stable models to the software development team so that it can begin coding well before RTL is available. Such models can also be shared with external partners.

- A vital response to ‘endless verification’, and in particular simulation. Stable synthesizable C-models can run through verification 50-1,000X faster than their RTL equivalents.

- The option to carry out not just high-level synthesis but also high-level verification, whereby the verification team can begin to develop stimuli and assess coverage, again well before RTL is complete.
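To make the idea of a synthesizable C model and its early testbench concrete, here is a minimal sketch in plain C++. It is not taken from either company's code: the `Fir4` block, its coefficients, and the `fir4_impulse_test` function are hypothetical, and the only assumption about HLS tools is the general one that they favor fixed sizes, compile-time loop bounds, and no dynamic memory.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical example block: a 4-tap FIR filter written in a style that
// HLS tools generally accept (fixed sizes, static loop bounds, no heap).
constexpr std::size_t kTaps = 4;

struct Fir4 {
    std::array<int16_t, kTaps> coeff{};  // filter coefficients
    std::array<int16_t, kTaps> delay{};  // shift register of past samples

    // Consume one input sample and produce one output sample.
    int32_t step(int16_t sample) {
        // Shift the delay line; the bound is a compile-time constant.
        for (std::size_t i = kTaps - 1; i > 0; --i) {
            delay[i] = delay[i - 1];
        }
        delay[0] = sample;

        // Multiply-accumulate across all taps.
        int32_t acc = 0;
        for (std::size_t i = 0; i < kTaps; ++i) {
            acc += static_cast<int32_t>(coeff[i]) * delay[i];
        }
        return acc;
    }
};

// Plain C++ testbench: drive an impulse and compare against the expected
// response. It runs as ordinary software, long before any RTL exists.
inline bool fir4_impulse_test() {
    Fir4 dut;
    dut.coeff = {1, 2, 3, 4};
    const std::array<int16_t, 6> stimulus = {1, 0, 0, 0, 0, 0};
    const std::array<int32_t, 6> expected = {1, 2, 3, 4, 0, 0};
    for (std::size_t n = 0; n < stimulus.size(); ++n) {
        if (dut.step(stimulus[n]) != expected[n]) {
            return false;
        }
    }
    return true;
}
```

Because the model and its stimulus are ordinary C++, the same vectors can in principle be reused once RTL has been generated, which is where the early start on verification comes from.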

Practical HLS/HLV strategies

Delivering compressed media over the web is obviously a big deal for Google.

Yet the delivery of progressively more efficient codecs, as well as the related IP and hardware, has been a slow process. Even as image definitions for video have rapidly progressed beyond 720p and 1080p toward 4K (2160p), with 8K (4320p) on the horizon, the evolution from the H.264 standard to High Efficiency Video Coding (HEVC, aka H.265) has taken a decade. An RTL implementation can add a further year to the IP design process.

During this time, Google has been a key member of the WebM consortium (whose work is now being moved progressively into the Alliance for Open Media). WebM aimed to streamline the codec development and implementation process in the face of burgeoning consumer demand for mobile video. Given the need for that acceleration, Google decided to adopt an HLS/HLV strategy to create G2 VP9 hardware IP and thereby make the latest standard widely available.

HLS would, the company decided, best allow it to collaborate with other WebM members in terms of both design optimization and debug. Meanwhile, the abstraction of verification tasks would allow it greater control over some of the particular challenges video compression presents – in particular a mushrooming of test vectors.

The decision paid off. Google cites the following among the specific benefits of an HLS/HLV strategy:

- Code for its 14-block IP implementation ran to 69,000 lines of C, against an estimated 300,000 lines of RTL.

- Simulation runs during high-level verification were 50X faster than those anticipated for the equivalent based on RTL.

- WebM partners reported a simpler collaboration process with joint debug efforts running much more smoothly.

- HLS could be run on each design block in about an hour, allowing more time for exploration and optimization before the move to RTL.

- The total design and verification effort for the design lasted six months, against an anticipated 12 months had the work been undertaken in a traditional RTL flow.
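As a quick sanity check on the figures above, the ratios they imply can be worked out directly. The short C++ sketch below only restates the numbers Google cites; the helper and constant names are ours, chosen for illustration.

```cpp
#include <cassert>

// Illustrative helper (our naming): how many times larger the baseline is.
constexpr double reduction_factor(double baseline, double actual) {
    return baseline / actual;
}

// Estimated RTL line count vs. actual C line count, as quoted above.
constexpr double kCodeSizeFactor = reduction_factor(300000.0, 69000.0);
// Anticipated RTL-flow schedule vs. actual schedule, in months.
constexpr double kScheduleFactor = reduction_factor(12.0, 6.0);

static_assert(kCodeSizeFactor > 4.3 && kCodeSizeFactor < 4.4,
              "roughly 4.3 times fewer lines of source");
static_assert(kScheduleFactor == 2.0, "half the anticipated schedule");
```

In other words, the team wrote a little over four times less source code and halved the schedule, while the 50X simulation speed-up sits at the low end of the 50-1,000X range quoted earlier.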

Google also has this to say about the improvements it achieved during verification: “A C-based HLS flow dramatically reduces the overall RTL verification effort because it allows engineering teams to more rapidly test each change to the source code and to share code across different hardware and software teams.

“Less low-level implementation detail in the source code enables faster simulation and quicker debug and modification. Higher simulation performance means more tests can be run to more fully exercise the source code, with industry-standard tools used to monitor and check the functional coverage provided by the test sets.”

So much for the testimony, though. What about our theme – pragmatism?

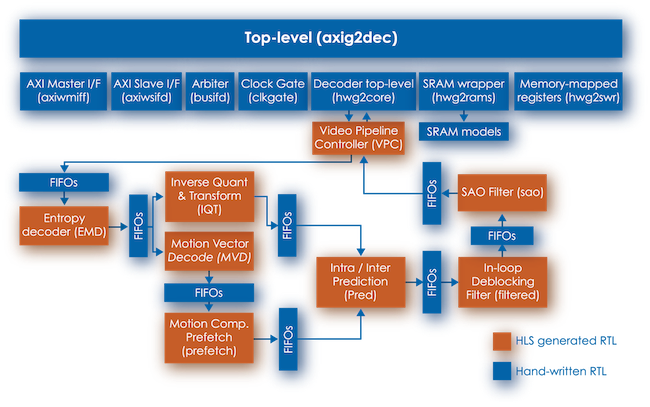

Google achieved these results after making a number of important methodology choices. Recall the reference above to a ‘14-block’ implementation.

“To manage risk on its first HLS design, the Google team decided to implement each block of the video decoder as a separate project. This flow allows multiple blocks to be optimized in parallel by different engineers while the top-level interconnect model is written by hand….

“Other blocks that contained SoC integration-critical parts (e.g., clock gating, SRAM container) were implemented in RTL.”

Google used sensible project partitioning, based on its knowledge of and increasing comfort with HLS and HLV. This was not a proof-of-concept.

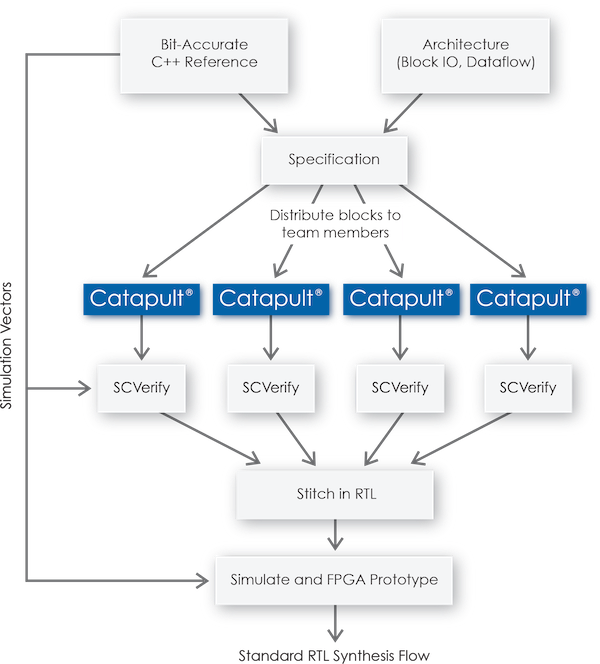

The breakdown of the design partitioning and the resulting design and verification flow are shown in figures 1 and 2 respectively. The HLS tool used to achieve this was Catapult HLS from Mentor Graphics.

Qualcomm

One part of Qualcomm, the group responsible for implementing image processing hardware, has been a long-term user of HLS/HLV. Success on those projects recently encouraged the company to look at rolling out HLS/HLV across more of its design activities. But it quickly realized that this would have to be based on a company-wide methodology.

“Developing a standardized flow is a requirement of mass deployment for any new design technology,” a Qualcomm paper states. “Without a standard flow and a deterministic way of getting design done – even where there is a very good core technology – many people will not use it because it is too new and thus too difficult for non-expert users.

“Large teams and companies must be able to share the know-how of the expert users through a standard set of procedures and by putting in place a design quality control mechanism, including metrics that designers have to meet. Also they must be able to reproduce the design and verification result exactly so that they can meet that metric requirement.”

In short, a very pragmatic set of constraints on the methodology. This has resulted in an approach that has four important elements.

- Architectural exploration with a particular focus on power.

“HLS tools and architectural exploration enable us to get power indications early and make the optimal architectural trade-offs. In our typical RTL design flow, we do not get power numbers until very late in the design. If the power budget is blown, not much can be done without incurring a big design schedule hit.”

- Represent everything in the C domain with synthesizable code, if possible.

“The more functionality that is synthesizable into hardware, the higher the quality of verification and the higher the quality of the final deliverable.”

-

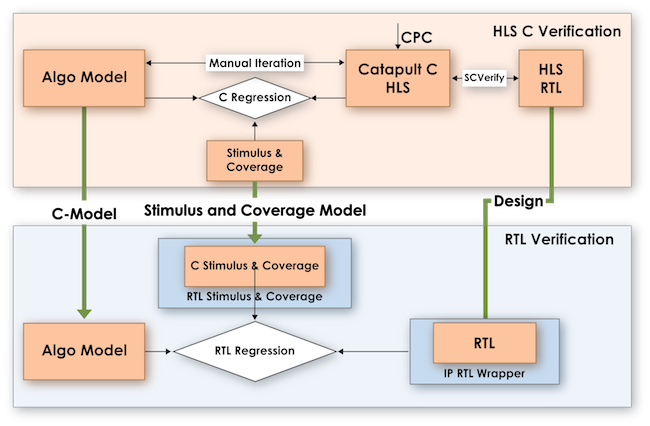

Promote high-level verification

“Our flow is verification-centric. Typically, we write the synthesizable C code and verify it before spending time analyzing the HLS synthesis reports. We can then easily iterate on the architecture from a stable starting point. This is a key differentiator from traditional RTL design where verification usually happens much later.”

- Make it look familiar.

One of the key tools used by Qualcomm to roll out its HLS/HLV strategy has been Catapult CPC (C-level property checking) from Mentor Graphics.

“CPC gives people new to the C domain a way to come on board and immediately become experts, because CPC has that experience embedded in it. From an assertions perspective, CPC reduces the state space by doing assertion proving…. Furthermore, assertion proving is a technology and methodology that RTL engineers are familiar with, which is another way it facilitates the move up a level of abstraction.”
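The general idea of carrying assertions into the C domain can be sketched as follows. This is a hypothetical illustration using the standard C++ `assert` macro, not Catapult CPC's own interface or Qualcomm's code; the `blend_pixels` block and its weight range are invented for the example.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: clamp a 32-bit intermediate to the 8-bit pixel range.
inline uint8_t clamp_to_pixel(int32_t value) {
    if (value < 0) return 0;
    if (value > 255) return 255;
    return static_cast<uint8_t>(value);
}

// Hypothetical block: blend two pixels using a weight in the range [0, 16].
inline uint8_t blend_pixels(uint8_t a, uint8_t b, uint8_t weight) {
    // Precondition on the interface, written as an assertion.
    assert(weight <= 16 && "weight must be in [0, 16]");

    // Weighted average with rounding; the divide by 16 is a shift.
    const int32_t mixed = (a * weight + b * (16 - weight) + 8) >> 4;

    // Property: a weighted average of two pixels stays in the pixel range.
    assert(mixed >= 0 && mixed <= 255);

    return clamp_to_pixel(mixed);
}
```

In simulation, such assertions are checked only for the stimuli that happen to be applied. A property-checking tool would instead attempt to prove them for all legal inputs, which is the kind of assertion proving the quote describes as familiar territory for RTL engineers.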

The ‘To Do’ list

Why are companies like Google and Qualcomm willing to stand up and discuss the benefits of HLS/HLV, and, perhaps more important, talk about how they implemented these strategies? Why give up competitive advantage?

In part, there remains some work to be done in the HLS domain around standards and best practices. It also helps when big players urge on the EDA community to continue to provide and improve tools. And, even though it is more than a decade since HLS made its debut, most companies are still only a certain way along the learning curve.

Again, look at the very pragmatic strategies that these two major players are rolling out and refining. And they are getting results.

Further reading

As noted at the beginning, here are the two papers in which Google and Qualcomm discuss their experiences in greater depth. This article is inherently indebted to their authors.

“Designing ASIC IP at a Higher Level of Abstraction” (Qualcomm)