Knock down the wall to SoC integration

SoC integration can be accelerated by using virtualization to make the benefits of emulation more accessible to both hardware and software engineers.

Software engineers are too often treated as an afterthought in the SoC integration and verification process, in which hardware-software integration remains a rigidly serial process, despite software’s increasing domination of the overall system function. Traditional verification technologies that are supposed to enable such integration actually prevent it.

System-on-chip (SoC) verification environments have not been very software-aware, limiting the productivity and quality gains available from better hardware-software integration. Software engineers face the same time-to-market pressures as their hardware counterparts, and sometimes greater ones if design-in customers want early access to the chip. We must knock down the wall between the two disciplines.

There is now a productized technology that can help do just that by making hardware models available early to software engineers. Hardware and software can be designed, verified, and improved in unison, boosting productivity.

The Great Wall

FPGA prototyping

FPGA prototypes are widely used but impose a serial flow. The software team cannot get a development board until the RTL is finished. If the design is a moving target and is a multi-processor SoC with more than a few million gates, this inserts unnecessary delay into HW/SW integration.

Then, when designs become too large to fit onto one FPGA board, mapping the RTL becomes a real problem. The typical performance of FPGA software means it can take many hours or days to compile and then place-and-route a design on the full prototype. ECOs expand the turnaround time further before verification can resume.

Add to that the difficulties in gaining enough visibility into a design for effective debugging with standard FPGA verification techniques, and the whole process becomes yet more difficult and unproductive.

Accelerated simulation with VIP

Accelerated simulation using verification IP speeds up hardware design. But it does not bring the software and integration teams into the loop.

Although testbench-based simulation environments (e.g., SystemVerilog/UVM for hardware verification) are ideal for testing and debugging RTL IP, software developers cannot easily run their tests and use their tools concurrently in this environment. We need an alternative approach to system-level verification.

Emulation

Emulation can make a difference, but in-circuit emulation (ICE) effectively strands the emulator in the lab. Access becomes a special privilege, largely confined to hardware engineers. Either software teams get very limited access, or companies must buy and maintain multiple emulator setups.

Multiple emulators, each perhaps needing several hardware speed adaptors to model protocol host and peripheral connections, fill the lab with fault-prone cables, run into pin limitations, and incur high costs.

Fenced in

Thus, there remains an unnecessarily high wall between hardware and software engineers: different verification silos; different languages; related but disconnected problems.

The verification process must reflect the importance of software: it constitutes a large and increasing share of any SoC, is updated more frequently than hardware, and may occupy ten times as many engineers as the hardware does.

In consumer electronics, hardware will often undergo a respin every few months. This leaves almost no time for software teams to do their work if they need to wait for hardware prototypes.

Tear down the wall

A new virtual lab use-model for emulation helps tear down the wall, shortens development time, and enhances verification robustness.

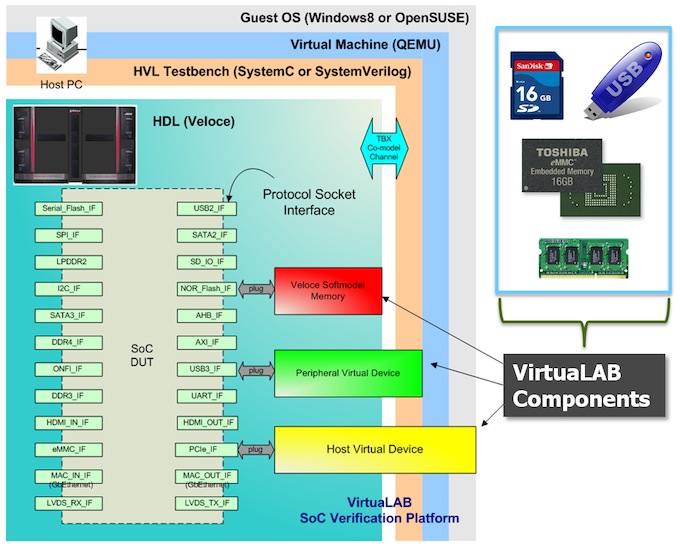

It replaces physical hardware models with virtual peripherals that run target hardware protocols in software, moving the emulator out of the lab and into the datacenter. The emulator can be accessed like a server by everyone in the company. Virtual labs provide stable 24/7 emulation environments in which hardware and software meet and evolve together.

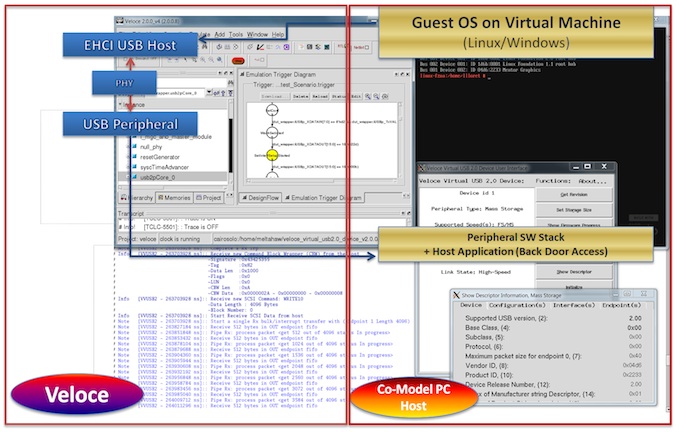

Figure 1 With VirtuaLAB, the software environment host and peripheral protocols run in software (Source: Mentor Graphics)

Virtual models can be connected or disconnected in software by choosing a particular configuration of a compiled design under test (DUT) rather than by connecting different pieces of hardware with cables. The emulator can be accessed simultaneously by software, hardware, and integration engineers without disruption. This approach can be applied both to a single project and to multiple projects running at the same time.
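As a rough illustration of what selecting a configuration in software can look like, the sketch below models several peripheral sets for one compiled DUT and computes which virtual models would be disconnected and connected when switching between them. Every name here (`LabConfig`, `CONFIGS`, `reconfigure`, and the configuration labels) is hypothetical and chosen for illustration only; none of it is the VirtuaLAB interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabConfig:
    """Hypothetical description of one virtual lab setup for a compiled DUT."""
    name: str
    peripherals: frozenset


# One compiled DUT, several peripheral sets selected purely in software.
# The configuration names are assumptions made for this sketch.
CONFIGS = {
    "storage_bringup": LabConfig("storage_bringup", frozenset({"SATA", "DDR"})),
    "usb_driver_dev": LabConfig("usb_driver_dev", frozenset({"USB", "DDR"})),
    "full_system": LabConfig(
        "full_system", frozenset({"USB", "PCIe", "Ethernet", "SATA", "DDR"})
    ),
}


def reconfigure(current, target):
    """Return (to_disconnect, to_connect) as sorted lists of model names."""
    to_disconnect = sorted(current.peripherals - target.peripherals)
    to_connect = sorted(target.peripherals - current.peripherals)
    return to_disconnect, to_connect


if __name__ == "__main__":
    drop, add = reconfigure(CONFIGS["storage_bringup"], CONFIGS["usb_driver_dev"])
    print("disconnect:", drop)
    print("connect:", add)
```

The point of the sketch is that a change of setup is a set difference computed in software, with no cables to move between runs.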

Where ICE typically provides access for a handful of engineers per emulator, a virtual lab can provide access for hundreds. That’s a dramatic expansion of a valuable resource.

With 24/7 remote access and lower downtime due to fewer physical connection issues (no more kicked cables or running out of pins), the cost-benefit advantages over ICE become even greater.

Plus, because users can run host connections through a virtual machine, they don’t have to worry about crashing the verification process if they address a memory that does not yet exist.

With a virtual lab, the global software team gets access to hardware while it is still in RTL, running against multiple peripherals, on a single emulator. Developers can target it from remote PCs, using the real OS, drivers, stack, and applications rather than hardware targets running on speed adaptors.

A virtual lab using independently validated protocol models, and running on a Mentor Graphics Veloce emulator, brings the SoC verification environment closer to the real, externally connected one with which the target device will ultimately interact. The protocol models are the same IP and same software, delivering the same functionality as ICE-based speed adaptor solutions. But they are now delivered as software products, or Veloce VirtuaLAB components.

Figure 2 VirtuaLAB components provide models for Flash memories, DDRs, USB, PCIe, Ethernet, SATA, HDMI, and other AV standards (Source: Mentor Graphics)

As more embedded software is verified with hardware in virtual labs, the productivity of SoC verification immediately increases. Going forward, the technique will raise our understanding of how software debug can be improved, and thereby also improve the efficiency of hardware-software integration.

Further enhancing the virtual lab

Multicore SoCs are encountering limitations in traditional hardware-based debug connections, such as JTAG. This is also driving interest in software-based alternatives.

JTAG is intrusive because it suspends the target processor during interactive debug, and the debug session takes thousands of additional clock cycles while the state of the rest of the design advances. In a multiprocessor design, the other processors continue clocking normally and fall out of sync with the target processor. The behavior being debugged would then not occur in the real software-hardware environment, which renders the process ineffective.
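The loss of synchronization can be seen in a toy model. The sketch below is a deliberate simplification, not a model of JTAG itself: two cores each advance one cycle per system tick, and a hypothetical debug halt stops core 0 for a window of ticks while core 1 keeps running. The function name `run` and its parameters are assumptions made for illustration.

```python
def run(total_ticks, halt_at=None, halt_ticks=0):
    """Advance two cores for total_ticks system ticks.

    Core 0 is optionally halted for halt_ticks ticks starting at tick
    halt_at; core 1 always keeps clocking. Returns the per-core cycle counts.
    """
    cycles = [0, 0]
    for tick in range(total_ticks):
        halted = halt_at is not None and halt_at <= tick < halt_at + halt_ticks
        if not halted:
            cycles[0] += 1  # the debugged core only advances when not halted
        cycles[1] += 1      # the other core is unaffected by the halt
    return cycles


if __name__ == "__main__":
    undisturbed = run(10_000)
    debugged = run(10_000, halt_at=100, halt_ticks=2_000)
    print("undisturbed:", undisturbed)
    print("with halt:  ", debugged)
    print("skew in cycles:", debugged[1] - debugged[0])
```

In the undisturbed run both counters stay equal; with the halt, core 1 ends up ahead of core 0 by exactly the length of the halt window, so any interaction between the two cores is observed at a different relative time than it would be on real silicon.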

Mentor Graphics’ Codelink software provides a non-intrusive debug connection that passively streams data from the design (i.e., monitoring rather than interrupting the process as JTAG does). Codelink offers a more transparent way of performing multiprocessor design debug, and the virtual lab is a key enabler both for using it in emulation and delivering it to teams worldwide.
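The idea of passive streaming can be sketched in the same toy style. Here a hypothetical monitor records what each core does on every tick without ever pausing any core, so all cores stay in step. The class `TraceMonitor`, the function `run_with_trace`, and the fixed 4-byte program counter step are assumptions made for illustration and do not represent the Codelink interface.

```python
from collections import deque


class TraceMonitor:
    """Hypothetical passive observer: it records events and never stalls a core."""

    def __init__(self):
        self.events = deque()

    def observe(self, tick, core, pc):
        # Recording is the only side effect; execution is not altered.
        self.events.append((tick, core, pc))


def run_with_trace(ticks, cores=2):
    """Advance all cores in lockstep while streaming (tick, core, pc) events."""
    monitor = TraceMonitor()
    pcs = [0] * cores
    for tick in range(ticks):
        for core in range(cores):
            pcs[core] += 4  # assumed fixed-width instruction, for simplicity
            monitor.observe(tick, core, pcs[core])
    return pcs, monitor


if __name__ == "__main__":
    pcs, monitor = run_with_trace(10)
    print("final program counters:", pcs)
    print("events captured:", len(monitor.events))
```

Because the monitor only appends to a log, the final program counters are identical across cores, and the recorded trace can be inspected afterwards without having disturbed the run.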

Conclusion

The virtual lab method puts emulation into the datacenter, providing server-like access. It is all about bringing software engineering into the heart of verification. Software and integration engineers have been knocking on the wall long enough; it’s time we knocked it down.

Author

Richard Pugh is the product marketing manager for Mentor’s Emulation Division.

Contact

Mentor Graphics
Corporate Office
8005 SW Boeckman Rd
Wilsonville, OR 97070
USA

T: +1 800 547 3000
W: www.mentor.com