Streamlining software development for a hardware ecosystem

Software accounts for more than half the development cost for a complex system-on-chip (SoC) platform at the 45nm process node or below. The availability of fundamental software such as compilers, debuggers, operating systems and industry-specific middleware determines the success or failure of a chip design.

In simple terms, if there is little or no software support for an SoC at launch, it is unlikely to find many early adopter OEMs, no matter how interesting the intellectual property integrated within it is. Leading SoC vendors must help their customers streamline and improve the software development process.

One very good response to this software challenge is to use simulation technology or virtual platforms. For the purposes of this article, we define a virtual platform as “a piece of software that works like a piece of hardware, running the same target software stack as would the physical hardware.”

The classic use case for a virtual platform is to enable the development of software before hardware is available in physical form. A virtual platform can be created early and quickly from product specifications, making it available long before the first chip samples and early development boards arrive. It can also be shared far more widely than a physical prototype. A virtual platform is ‘just’ software and thus can be copied and used in any number of instances at any one time.

Every engineer working on a target system can have a prototype on the desktop, no matter where they are in the world. No more need for designers to fight over a limited supply of hardware. Indeed, partners and customers can also be brought into the development process easily, where desirable. All these factors greatly facilitate early-stage, large-scale software development. This in turn allows complex systems-on-chip (SoCs) to be launched with a full software ecosystem in place on Day One, as both OEMs and system solutions customers increasingly demand.

A recent and successful example of this strategy was the June launch of Freescale Semiconductor’s QorIQ P4080 processor. It was accompanied by the release of a set of software packages to run on an accompanying virtual platform. The packages included proprietary and open source operating systems (OSs). Support for hardware-accelerated processing of network traffic using third-party middleware was also demonstrated, making use of new datapath acceleration features on the processor. The partner companies that developed the software packages received a Virtutech Simics P4080 hybrid virtual platform only a few months ahead of the announcement. The general consensus was that the partners could not have delivered running code alongside the processor launch if the platform had not been available.

Virtual platforms are even more valuable for chip designs that break new ground. The ongoing transition to multicore processors requires that most real-time OSs be rewritten to accommodate various symmetric and asymmetric multiprocessing (SMP and AMP) configurations, including combinations of the two modes on a single SoC.

Using a Simics virtual platform, OSs for the eight-core P4080 were not just ported to the new chip, but also transformed into multiprocessor operating systems. Virtutech Simics virtual platforms had already been used successfully to support ecosystem development for Freescale’s first generation of dual-core Power Architecture-based processors, including the MPC8572E PowerQUICC III device and the high-performance MPC8641D processor. The Simics contribution there included virtualization of the standard hardware development systems that accompanied these embedded processors.

Virtual platforms also make it easier to support the development of software for the OEM hardware vendor, since bugs and suspicious issues are easy to reproduce. One of the hallmarks of a good virtual platform is the predictable or deterministic behavior of the system. The virtual platform provides a system free from random instabilities, with strong versioning support for all the hardware components involved. It also lets users capture the complete hardware and software setup of a system as a checkpoint, and pass that to the hardware vendor (or the other way around) for consultation.
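The determinism and checkpointing described above can be illustrated with a minimal sketch. It assumes a toy simulator whose only source of variation is a seeded pseudo-random generator. The class `ToyPlatform` and its methods are hypothetical names invented for this example and do not represent the Simics interface.

```python
import random


class ToyPlatform:
    """Hypothetical stand-in for a virtual platform (not a real simulator API)."""

    def __init__(self, seed):
        # All variation comes from a generator owned by the simulation,
        # so two runs with the same seed behave identically.
        self.rng = random.Random(seed)
        self.cycle = 0
        self.trace = []

    def step(self, n=1):
        for _ in range(n):
            self.cycle += 1
            # Model an event (for example a packet arrival) whose value
            # looks random but is fully reproducible.
            self.trace.append(self.rng.randrange(256))

    def checkpoint(self):
        # Capture the complete state, including the generator state.
        return {
            "cycle": self.cycle,
            "trace": list(self.trace),
            "rng": self.rng.getstate(),
        }

    @classmethod
    def restore(cls, snapshot):
        platform = cls(seed=0)  # the seed is overwritten by setstate below
        platform.cycle = snapshot["cycle"]
        platform.trace = list(snapshot["trace"])
        platform.rng.setstate(snapshot["rng"])
        return platform
```

In this sketch, a customer could run to the point where a problem appears, call `checkpoint()`, and hand the snapshot to the vendor. A platform rebuilt with `restore()` then continues with exactly the same sequence of events as the original run.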

Customer design-in

When the fundamental software is available very early, the customer for the impending chip can begin software development and system integration well in advance of silicon. Using a plain virtual board running an OS, the customer can test existing internal software stacks, and also explore how its software can exploit features of interest such as hardware accelerators or heterogeneous processing cores.

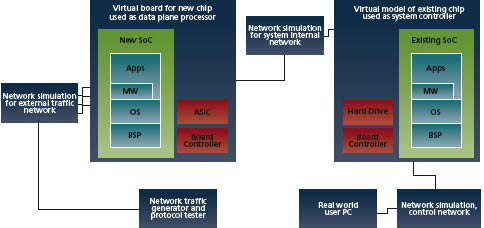

Thus, once a customer commits to a new chip, engineers can start building virtual boards and perform what is in essence a virtual design-in. This can involve extending the virtual platform with models of custom ASICs or FPGAs, or of standard parts to be used in the final board design. One interesting use case is when a customer already has a virtual platform for existing systems, and integrates that with the new virtual board. This often occurs for rack-based systems, where multiple generations of hardware have to coexist. This type of integration is shown in Figure 1.

Source: Virtutech/Freescale

FIGURE 1 Integration of new and existing virtual models

Virtual platform technology

Modern software packages are huge. Booting, configuring and testing them requires billions of instructions to be executed. A virtual platform therefore has to be very fast at executing code. Booting a virtual platform should take minutes, not hours or days. The key to meeting this target is the use of transaction-level models and approximate timing.

In our experience, virtual platforms that use a constant time for each target instruction and do not model the detailed latencies of memory and device accesses are the best fit for large software development efforts. They provide the needed execution speed with sufficient hardware timing to make the software run as well as it runs on the physical hardware.
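As a rough illustration of constant-time instruction modelling, the sketch below advances virtual time by a fixed number of cycles per instruction and ignores cache, pipeline and bus latencies entirely. The class name `FastCore`, the one-cycle cost and the 1 GHz clock are assumptions made for this example, not figures from any real platform.

```python
from dataclasses import dataclass


@dataclass
class FastCore:
    """Toy core model: every instruction costs the same number of cycles."""

    cycles_per_instruction: int = 1   # assumed constant cost per instruction
    clock_hz: int = 1_000_000_000     # assumed 1 GHz target clock
    instructions: int = 0

    def execute(self, count):
        # No memory or device latency is modelled; only the count matters.
        self.instructions += count

    @property
    def cycles(self):
        return self.instructions * self.cycles_per_instruction

    @property
    def virtual_seconds(self):
        # Virtual time as seen by the target software (timers, timeouts).
        return self.cycles / self.clock_hz
```

With these assumed numbers, executing two billion instructions advances the target's virtual clock by two seconds, regardless of how long the host machine took to simulate them.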

Streamlined fast models also have the advantage of being far easier and faster to create. The information content is less, as fewer implementation details are present, and that significantly reduces the size and complexity of the code. A good rule of thumb is that a timing-accurate model is at least ten times more complex than a fast model, and takes about ten times as long to write. Fast models can be created using only a specification of the programming interface for a device, with no need to wait for the actual implementation to be finished. Fast models are also more reusable, as they do not have to be changed because of alterations to the implementation of a device.
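To show what a model built only from a programming interface might look like, here is a sketch of a transaction-level serial port. The register layout (a `DATA` register at offset 0x0 and a `STATUS` register at offset 0x4) is invented for this example and does not describe any real device. The point is that the model reproduces only what software can observe through the registers.

```python
class ToySerialPort:
    """Transaction-level model of a hypothetical serial port.

    Register offsets are invented for this example:
      0x0 DATA   (write: transmit one byte)
      0x4 STATUS (read: bit 0 set when the transmitter is ready)
    """

    DATA = 0x0
    STATUS = 0x4
    TX_READY = 0x1

    def __init__(self):
        self.output = bytearray()

    def read(self, offset):
        if offset == self.STATUS:
            # The fast model is always ready: no baud rate or FIFO timing.
            return self.TX_READY
        return 0

    def write(self, offset, value):
        if offset == self.DATA:
            self.output.append(value & 0xFF)


def driver_puts(port, text):
    """Driver-style routine that polls STATUS before each DATA write."""
    for byte in text.encode("ascii"):
        while not port.read(ToySerialPort.STATUS) & ToySerialPort.TX_READY:
            pass
        port.write(ToySerialPort.DATA, byte)
```

A change to the device's internal implementation, such as a deeper FIFO, would leave this model untouched as long as the register interface stays the same.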

If every hardware model and its supporting software had to be developed from scratch, delivering any virtual platform would take a very long time. Thankfully, almost all new chip designs reuse many elements and this allows virtual platforms to be built around existing device models. You can often make hardware drivers and firmware code reusable by simply adjusting the memory map in an existing board support package (BSP). Also, because of the layered nature of modern software, once an OS runs on a particular chip, most software targeting a similar processor and OS will also run on it.
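The reuse argument can be sketched as follows, assuming a simple address decoder. The same device model is placed at a different base address for a new board, and nothing else changes. `MemoryMap`, `ScratchRegister`, `build_board` and the addresses used are all hypothetical.

```python
class ScratchRegister:
    """Minimal device: one 32-bit read/write register at offset 0."""

    def __init__(self):
        self.value = 0

    def read(self, offset):
        return self.value if offset == 0 else 0

    def write(self, offset, value):
        if offset == 0:
            self.value = value & 0xFFFFFFFF


class MemoryMap:
    """Routes physical addresses to device models by (base, size) region."""

    def __init__(self):
        self._regions = []  # list of (base, size, device)

    def map(self, base, size, device):
        self._regions.append((base, size, device))

    def _find(self, addr):
        for base, size, device in self._regions:
            if base <= addr < base + size:
                return device, addr - base
        raise LookupError(f"unmapped address {addr:#x}")

    def read(self, addr):
        device, offset = self._find(addr)
        return device.read(offset)

    def write(self, addr, value):
        device, offset = self._find(addr)
        device.write(offset, value)


def build_board(register_base):
    # Only the base address differs between board variants.
    bus = MemoryMap()
    bus.map(register_base, 0x1000, ScratchRegister())
    return bus
```

Calling `build_board(0xE0000000)` and `build_board(0xFE000000)` yields two boards that share the device model unchanged, mirroring how a BSP can often be adapted by editing its memory map.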

Early virtual platform drops to OS developers do not have to be complete or definitive. As soon as the basic components required to support an OS boot are in place, fundamental software can start. Typically that means a set of processor cores, an interrupt controller, a serial port, memory and possibly an Ethernet connection. Such a simple platform will allow an OS kernel to be ported, paving the way for adding more BSP details and running software.

These factors mitigate the ‘big-bang’ problem in hardware-software integration, where all the system components have to be in place for anything to work. Rather, it is possible to develop and test the hardware-software integration in increments, reducing risk and development time.

Performance analysis

Streamlined virtual platforms are very suitable for getting software up and running, making sure it is functionally correct, and that algorithms and protocols scale as expected. Sometimes, however, developers need detailed benchmarking data on how code runs on a particular hardware platform, or the real-time performance of code has to be validated. To accommodate this need, hybrid models that combine fast models with detailed cycle-level performance models are in development.

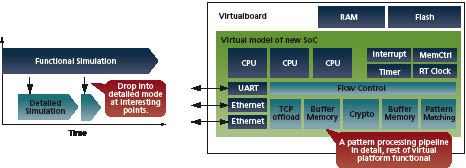

Source: Virtutech/Freescale

FIGURE 2 Changing models

Hybrid models are even more useful during the transition from designing multiple single cores to designing integrated multicore solutions. A good example here is the P4080 platform, which has an innovative multicore microarchitecture aimed at boosting computing efficiency, performance and scalability.

When using a hybrid solution, the fast functional model is used to run the software load and get the system into a state that is of interest. Then, you can change a part of (or the whole) system to use detailed models. Figure 2 shows the concepts of changing over at various points in time, and mixing models at different levels of detail within a single simulation. This is the only reasonable way to gather performance details from large software loads, because otherwise running billions of instructions in detailed mode would take days.
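The changeover from fast to detailed simulation might be sketched like this. The example assumes a toy core that counts one cycle per instruction in fast mode and looks up a per-instruction latency in detailed mode. The class `HybridCore` and the latency table are invented for illustration and say nothing about the timing of a real processor.

```python
# Assumed per-instruction latencies for the detailed mode (illustrative only).
DETAILED_LATENCY = {"alu": 1, "load": 4}


class HybridCore:
    """Toy core that can switch timing model in the middle of a run."""

    FAST = "fast"
    DETAILED = "detailed"

    def __init__(self):
        self.mode = self.FAST
        self.instructions = 0
        self.cycles = 0

    def switch(self, mode):
        # State (here just the counters) carries over unchanged; only the
        # timing model used for subsequent instructions differs.
        self.mode = mode

    def run(self, trace):
        """Execute an iterable of instruction kinds ('alu' or 'load')."""
        for kind in trace:
            self.instructions += 1
            if self.mode == self.FAST:
                self.cycles += 1
            else:
                self.cycles += DETAILED_LATENCY[kind]
```

A typical session under these assumptions would run the boot sequence in `FAST` mode, call `switch(HybridCore.DETAILED)` just before the code of interest, and read the cycle count afterwards.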

The detailed, cycle-level models will arrive later than the fast models, as they take longer to create and depend on more of the hardware design being complete. However, this should not impact the availability of target software, as the first step is always to get the code to run correctly. Later, once the code is stable, detailed performance analysis and optimization can be performed. The ‘first make it work, then make it fast’ methodology leaves room for the development of detailed models while customers and partners are using the fast models to get target software in place.

Virtual platforms can be used to provide detailed performance analysis and critical debug after reference or design targets have been developed. The combination of virtual platform and SoC platform design enables rapid use of these advanced capabilities so that your ecosystem partners can begin integration well in advance of completion or sampling of the chips. This gives users a marked advantage in terms of time to market and time to revenue.

Virtutech AB

Drottningholmsvägen 14, 3tr

SE-112 42 Stockholm

Sweden

T: +46 8 690 07 20

W: http://www.windriver.com/products/simics/

Freescale Semiconductor Inc.

6501 William Cannon Drive West

Austin

TX 78735

USA

T: +1 800 521 6274