Seven habits of highly effective virtual prototypes

Virtual prototyping is not a new technique, but the advent of transaction-level modeling and an increased focus on seven key requirements for effective use mean that today’s virtual prototypes are much more broadly applicable and comparatively future-proof.

The share of key functionality implemented in software running on processors continues to grow in new designs. No longer dominant only in laptops and PCs, software reigns in communication, networking, and automotive devices, and embedded software is found in many consumer devices. With off-the-shelf platforms providing the foundation for modern designs, it is software combined with selected hardware accelerators that differentiates one product from another. The growing importance of low-power consumer and green devices is one reason for the increase in software processing units. Modern low-power design techniques have built-in facilities to control power, but embedded and application software have the “smarts” that add the use case context and determine how and when appropriate power control techniques can be applied. In addition, optimizing software for the processors it runs on can help reduce power consumption.

How well software and hardware interact defines a device’s key performance, power consumption, and cost attributes. Integrating and optimizing software after the hardware has already been built is no longer an option; nor is the common practice of validating hardware and software in isolation. Software and hardware interactions must be validated before either set of architectural decisions is finalized.

Virtual prototyping gives software engineers the ability to influence the hardware specification before the RTL is implemented and reduces the final HW/SW integration and verification effort. It also provides significant benefits over hardware prototyping by using high-speed abstracted simulation models of the hardware. Virtual prototyping enables software engineers to use their software debugger of choice. It facilitates the debugging of complex HW/SW interactions by providing simulation control and visibility into the hardware states, memories, and registers. And it provides a comprehensive set of analysis capabilities that allows engineers to optimize the software and improve how it controls the hardware to meet performance and power goals.

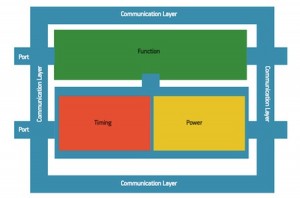

Figure 1

Scalable TLM power model and power modeling policies. Source: Mentor Graphics

Early virtual prototyping modeling techniques did not address today’s challenges. Most ran software against loose, proprietary mockup models of the hardware, providing a programmer’s view of the hardware to the software routines. They allowed partial validation of the software functionality against the hardware register address space, but had limited capabilities when it came to validating the functionality of an entire device. To overcome that limitation, these virtual prototypes attempted to provide additional cycle-accurate models that represented the functional behavior during each clock cycle while still simulating faster than RTL.

While the programmer’s view did not contain sufficient granularity of the underlying hardware to completely validate the software, cycle-accurate models required a modeling effort close to that of writing the RTL, and frequently suffered from insufficient simulation performance to run software application code. Evaluating either performance or power under software control using either technique was generally impractical. Reuse of these models to produce virtual prototypes outside the framework of a single vendor environment was impossible due to the proprietary, closed nature of the model interface. Reuse in downstream flows, such as RTL verification, was non-existent.

To overcome these issues, today’s more advanced virtual prototyping technologies, such as the Vista virtual prototyping technology from Mentor Graphics, should have seven key attributes that enable them to address current and future design challenges.

1. Industry standards

Advanced virtual prototypes are composed of transaction-level models (TLMs) that abstract functionality, timing, and communication. The SystemC TLM-2.0 standard allows these models to be reused from project to project and makes them interoperable both among internal design teams and across the entire industry. Standard-compliant TLMs can be run on any standard-compliant SystemC simulator without requiring proprietary extensions. In addition, TLM-2.0 contains specific enhancements that enable very efficient communication for optimal simulation speeds.

2. Platform modeling (LT/AT)

A platform modeling strategy defines not only the level of investment needed to create the platform but also the capabilities provided to the end user. A scalable TLM-based methodology separates communication, functionality, and the architectural aspects of timing and power into distinct models. Such a model can run in a loosely timed (LT) mode at very high speed, or it can switch to an approximately timed (AT) mode for more detailed performance and power evaluations under software control. When modeling power, AT mode allows engineers to associate power values with transaction-level computation and communication time and to take the power state of each model into account. LT/AT switching can be performed at run time to adapt to the software’s mode of operation, such as booting versus running application code.
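
The separation of function from timing and power can be illustrated with a small sketch. It is written in plain Python rather than SystemC, and the class name, latency figures, and power value are all assumptions chosen for illustration; none of them comes from the TLM-2.0 standard or from Vista.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    address: int
    num_bytes: int
    is_write: bool


class MemoryModel:
    """Hypothetical memory model: one functional core, two timing modes."""

    LT_LATENCY_NS = 10        # assumed fixed latency per access in LT mode
    AT_NS_PER_BYTE = 2        # assumed AT timing policy
    ACTIVE_POWER_MW = 50.0    # assumed power drawn while serving an access

    def __init__(self, size, mode="LT"):
        self.data = bytearray(size)
        self.mode = mode       # "LT" or "AT"; may be changed at run time
        self.time_ns = 0
        self.energy_pj = 0.0

    def transport(self, txn, payload=None):
        # Functional behavior is identical in both modes.
        end = txn.address + txn.num_bytes
        result = None
        if txn.is_write:
            if len(payload) != txn.num_bytes:
                raise ValueError("payload length does not match transaction")
            self.data[txn.address:end] = payload
        else:
            result = bytes(self.data[txn.address:end])

        # Timing and power are applied as a separate policy.
        if self.mode == "AT":
            duration_ns = txn.num_bytes * self.AT_NS_PER_BYTE
            self.time_ns += duration_ns
            self.energy_pj += self.ACTIVE_POWER_MW * duration_ns  # mW * ns = pJ
        else:
            self.time_ns += self.LT_LATENCY_NS
        return result
```

In use, a platform might keep `mode` at `"LT"` while the boot code runs and set it to `"AT"` before the routine under study, so that energy is accumulated only where the extra detail is wanted.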

3. Processor modeling (JIT)

Processor models that run the embedded software are at the heart of any effective virtual prototype. These models usually determine the overall simulation performance of the platform, depending on their modeling and communication efficiency. Just-in-time (JIT) modeling allows the embedded code to run most efficiently on the host by compiling the target processor’s instructions into native host processor instructions when they are needed. This is done while preserving thread safety and correctly supporting multiple instances of the same processor type or different processor types on the host.
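
The idea of translating target code on demand can be sketched with a toy instruction set. The four opcodes, the `JitCpu` class, and the use of Python’s `compile` as the “host code generator” are assumptions made for illustration; a production processor model emits host machine code and handles far more cases. Each instance keeps its own registers and translation cache, which is one simple way to keep multiple processor instances independent.

```python
class JitCpu:
    """Toy target CPU that translates basic blocks to host functions on demand."""

    def __init__(self, program):
        self.program = program   # list of (opcode, operand, ...) tuples
        self.regs = [0] * 4
        self.pc = 0
        self.cache = {}          # block start address -> compiled host function
        self.translations = 0

    def _translate(self, start):
        """Turn the basic block beginning at `start` into a Python function."""
        lines = ["def block(regs):"]
        pc = start
        while True:
            op, *args = self.program[pc]
            if op == "addi":     # regs[a] += immediate
                lines.append(f"    regs[{args[0]}] += {args[1]}")
            elif op == "add":    # regs[a] += regs[b]
                lines.append(f"    regs[{args[0]}] += regs[{args[1]}]")
            elif op == "bnez":   # branch to target if regs[a] != 0
                lines.append(
                    f"    return {args[1]} if regs[{args[0]}] else {pc + 1}")
                break
            elif op == "halt":
                lines.append("    return None")
                break
            else:
                raise ValueError(f"unknown opcode {op!r}")
            pc += 1
        namespace = {}
        exec(compile("\n".join(lines), f"<block@{start}>", "exec"), namespace)
        self.translations += 1
        return namespace["block"]

    def run(self):
        while self.pc is not None:
            block = self.cache.get(self.pc)
            if block is None:    # translate only the first time a block is hit
                block = self.cache[self.pc] = self._translate(self.pc)
            self.pc = block(self.regs)   # each block returns the next pc
        return self.regs


# Sums 5 + 4 + 3 + 2 + 1 into r1 using r0 as the loop counter.
PROGRAM = [
    ("addi", 0, 5),
    ("add", 1, 0),     # address 1: loop head
    ("addi", 0, -1),
    ("bnez", 0, 1),
    ("halt",),
]
```

The loop body executes five times, but the block at address 1 is translated once and then served from the cache, which is where the speed advantage over instruction-by-instruction interpretation comes from.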

4. Virtual prototype creation

This process assembles the individual industry-standard-based processors, peripherals, buses, and memory TLMs into a virtual platform capable of executing software natively. The platform producer can use the TLM block diagram capability to define the design topology by connecting graphical symbols representing each TLM. Similarly, topology changes can be implemented quickly by interactively changing the connections. Saving the topology can automatically generate the complete virtual TLM platform model used to run the software on the embedded processors. A virtual prototyping compiler can produce executables in sufficient quantities to serve large software design teams. Such an executable provides the level of hardware visibility and control needed to integrate, validate, and optimize OS and application code against hardware.
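
As a rough illustration of how a saved topology can be turned into a runnable platform, the sketch below builds a bus and its targets from a declarative description. The `TOPOLOGY` format, the component kinds, and the addresses are hypothetical; they stand in for whatever a block diagram editor would actually save.

```python
class Memory:
    """Simple word-addressed storage used for both RAM and register banks."""

    def __init__(self, size):
        self.cells = [0] * size

    def read(self, offset):
        return self.cells[offset]

    def write(self, offset, value):
        self.cells[offset] = value


class Bus:
    """Routes each access to the target whose address window contains it."""

    def __init__(self):
        self.windows = []   # list of (base, size, target)

    def attach(self, base, size, target):
        self.windows.append((base, size, target))

    def _decode(self, address):
        for base, size, target in self.windows:
            if base <= address < base + size:
                return target, address - base
        raise ValueError(f"no target mapped at {address:#x}")

    def read(self, address):
        target, offset = self._decode(address)
        return target.read(offset)

    def write(self, address, value):
        target, offset = self._decode(address)
        target.write(offset, value)


# Hypothetical saved topology: which components exist and where they are mapped.
TOPOLOGY = {
    "components": {"ram": ("memory", 256), "uart_regs": ("memory", 4)},
    "address_map": [("ram", 0x0000, 256), ("uart_regs", 0x1000, 4)],
}

FACTORIES = {"memory": Memory}


def build_platform(topology):
    """Instantiate every component and connect it to the bus."""
    components = {
        name: FACTORIES[kind](size)
        for name, (kind, size) in topology["components"].items()
    }
    bus = Bus()
    for name, base, size in topology["address_map"]:
        bus.attach(base, size, components[name])
    return bus, components
```

Changing the topology then means editing the description and rebuilding, with no changes to the component models themselves.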

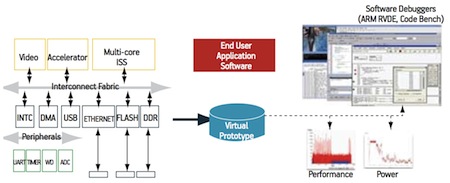

5. Integrated HW/SW debug

As software runs on the virtual prototype, it is important to provide the right level of visibility into the hardware states. Software engineers are used to running their favorite software debug tools, such as off-the-shelf GDB, ARM RVDE, and Mentor Sourcery CodeBench. An integrated debug environment on a virtual platform allows them to use their preferred debuggers to validate, debug, and optimize the software using standard hardware visualization techniques, such as state, memory, and register views of the hardware. Simulation control of both software and hardware is also needed, such as single-stepping through the software while simulation time advances, as are stop, checkpoint, and restart capabilities.
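
The simulation control described here (single step, checkpoint, restart) can be sketched in a few lines. The `Simulation` class and its three-field instruction format are assumptions for illustration and do not represent any debugger’s or simulator’s actual interface.

```python
import copy


class Simulation:
    """Toy platform state: program counter, simulated time, and registers."""

    def __init__(self, program):
        self.program = program   # list of (register, increment, cost_ns)
        self.pc = 0
        self.time_ns = 0
        self.registers = {"r0": 0, "r1": 0}

    def step(self):
        """Execute one instruction and advance simulated time by its cost."""
        if self.pc >= len(self.program):
            return False         # nothing left to execute
        reg, increment, cost_ns = self.program[self.pc]
        self.registers[reg] += increment
        self.time_ns += cost_ns
        self.pc += 1
        return True

    def checkpoint(self):
        """Return an independent snapshot of the full simulation state."""
        return copy.deepcopy((self.pc, self.time_ns, self.registers))

    def restore(self, snapshot):
        """Rewind the simulation to a previously taken snapshot."""
        self.pc, self.time_ns, self.registers = copy.deepcopy(snapshot)


DEMO_PROGRAM = [("r0", 1, 5), ("r1", 10, 20), ("r0", 2, 5)]
```

Because the snapshot holds both software-visible state and simulated time, restoring it lets an engineer replay the same scenario repeatedly, something that is hard to arrange on physical hardware.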

6. Performance/power analysis

Because the software controls the hardware’s modes of operation and use models, software optimization is essential to meeting device performance and low-power goals. This requires analysis graphs of metrics such as data throughput, latency, and power, including the dynamic hardware power attributable to each software routine executing on the platform. With these, the software designer can see the direct impact of software changes on the virtual platform’s performance and power attributes.
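
Attributing hardware activity to software routines amounts to aggregating a transaction trace by the routine that was executing. The sketch below assumes a hypothetical trace format of `(routine, bytes, duration_ns, power_mw)` records with invented values; real tools collect such data from the simulator.

```python
from collections import defaultdict


def profile_by_routine(trace):
    """Aggregate per-transaction records into per-routine totals."""
    totals = defaultdict(lambda: {"bytes": 0, "time_ns": 0, "energy_pj": 0.0})
    for routine, num_bytes, duration_ns, power_mw in trace:
        entry = totals[routine]
        entry["bytes"] += num_bytes
        entry["time_ns"] += duration_ns
        entry["energy_pj"] += power_mw * duration_ns   # mW * ns = pJ

    report = {}
    for routine, entry in totals.items():
        throughput = entry["bytes"] / entry["time_ns"] if entry["time_ns"] else 0.0
        report[routine] = dict(entry, bytes_per_ns=throughput)
    return report


# Hypothetical trace: two DMA bursts and one checksum pass.
TRACE = [
    ("dma_copy", 64, 32, 40.0),
    ("dma_copy", 64, 32, 40.0),
    ("checksum", 16, 80, 25.0),
]
```

A report of this shape is what a throughput or power graph would be plotted from: one row per routine, so that a change to a single routine shows up as a change in a single row.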

7. Optimization across multiple cores

To maximize performance and lower power consumption, software is partitioned across multiple cores to provide the best throughput for the desired functionality. However, over-partitioning may reduce performance because of increased inter-processor communication or contention for shared hardware resources. Conversely, underutilizing the processor/core resources results in sub-optimal performance. Thus the virtual prototyping solution must be able to conduct “what if” analyses to determine the optimal hardware configuration and software partitioning for differentiated designs.
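
A “what if” analysis is essentially a sweep over candidate configurations. In the sketch below, a deliberately simple analytic cost model stands in for running each configuration on the virtual prototype: compute time divides evenly across cores, and each additional core adds a fixed communication cost. The model and every number in it are assumptions for illustration only.

```python
def estimate(cores, work_ns=120.0, comm_ns_per_extra_core=8.0,
             core_power_mw=100.0):
    """Return (time_ns, energy_pj) for a given core count under the toy model."""
    time_ns = work_ns / cores + comm_ns_per_extra_core * (cores - 1)
    energy_pj = time_ns * cores * core_power_mw   # every core active throughout
    return time_ns, energy_pj


def sweep(max_cores):
    """Evaluate every core count from 1 to max_cores."""
    return {n: estimate(n) for n in range(1, max_cores + 1)}


def fastest(results):
    """Return the core count with the shortest execution time."""
    return min(results, key=lambda n: results[n][0])
```

Under these assumed numbers, execution time falls until four cores and rises again beyond that as communication dominates, while energy grows with every added core. The point of the sweep is to expose that trade-off before the hardware configuration is fixed.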

Mentor Graphics’ Vista hardware-aware virtual prototyping solution has all of these key attributes, enabling early validation of software against the target hardware, reducing the HW/SW verification effort, and easing the creation of post-silicon reference platforms. Virtual prototyping can be conducted at a much earlier design stage than physical prototypes—even before any RTL is designed—increasing productivity and shrinking time-to-market.

Figure 2

Creating a virtual prototype. Source: Mentor Graphics

Because virtual prototypes are highly abstract, the code representing the hardware is much smaller and simpler. Thus, virtual prototypes simulate orders of magnitude faster than RTL code, capturing bugs manifested in complex scenarios that are impossible to simulate at the RTL stage and making debug much easier. Further, TLM platform models can be used as golden reference models, reducing the time to construct RTL self-checking verification environments.

Even after the device and chip are fabricated, virtual prototypes provide post-silicon reference platforms for simulating scenarios that are difficult to replicate and control on the final product. They also give visibility into internal performance, power, and design variables not reachable within the physical chip. Virtual prototypes can be used for isolating field reported problems and for exploring and fixing a problem through software patches or design revisions.

Advanced virtual prototyping allows validation of the entire functionality implemented in both hardware and software. When in AT mode, virtual prototypes run several orders of magnitude faster than cycle-accurate platform models, yet they still achieve a sufficient level of accuracy to support comprehensive performance and power optimization. When running in LT mode, virtual prototyping allows software engineers to quickly run the application, middleware, and OS code at close to real-time speeds against a complete functional model of the hardware. Switching between AT and LT modes at run time allows the virtual prototype to be used effectively at any point during simulation.

Vista platform models give software engineers the ability to influence the hardware design before the RTL is implemented, reducing the final integration and verification efforts. Vista can also create an executable specification that can be provided to a large number of software engineers so that they can validate their application software against the hardware during the pre-silicon design stage. This executable can even be given to field engineers as a reference debugging platform during the post-silicon stage, after the product has been sold to customers.

As the amount of functionality implemented in software running on multicore processors continues to grow, how well software and hardware interact defines device performance, power consumption, and cost attributes. Advanced, hardware-aware virtual prototyping is the best way to optimize these important attributes and enable concurrent hardware/software development throughout the design flow.