Back to basics – doing formal the right way

A lot of attention has been given to formal apps over the past few years, and for good reason — they deliver the power of formal analysis in focused areas, allowing users who are not familiar with formal techniques to solve some tough verification challenges.

As usage of the apps has increased, new users have become interested in property checking and want to know how it can benefit their verification tasks. However, formal property checking is not a formal app, and it is important to understand how to use it to increase the likelihood of a successful outcome. So it’s a good time to go back to basics and outline approaches for doing formal verification the right way.

Make a plan

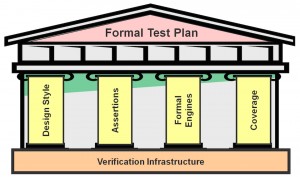

Successful verification starts with a testplan, and formal verification is no different. There are four elements that should feature prominently in every formal testplan:

- Design style

- Assertions

- Engines

- Coverage

Figure 1 “The right way” to do formal verification rests on the four pillars of a successful formal test plan (Source: Mentor Graphics)

Design style

Formal property checking has capacity limits — the number of states that can be modelled — so you need to consider the style of design you choose to verify. As not all of parts of an SoC will be verified using formal techniques, you want to focus on the parts that are best suited to the approach. The sweet spot is control logic and blocks with high levels of concurrency.

Data paths can be separated into data transports and data transforms. Transports — such as a PCI data link level — can be verified with property checking, while data transforms are typically beyond the scope of property checkers. Selecting the right block to verify with formal techniques is key to ensuring a successful outcome.

Assertions

Assertion creation should start with a good specification for the function of the block under test; they should not by written by looking at the RTL. Writing RTL-based properties will test the implementation, not the functionality. Users often try to express a complete requirement in their chosen assertion language, leading to overly complex property expressions that can choke the formal engines. It is often more efficient to express the requirement in a mix of assertion code and supporting RTL modelling code.

One approach for writing properties is to start with the requirements at a high level and then repeatedly decompose these into sub-requirements. The sub-requirements will eventually be simple enough to express as assertions. There are several benefits to this approach:

- The assertions will be simple enough that you will be able to verify them.

- Once lower-level assertions are proven, you can use assume-guarantee approaches to prove higher-level assertions.

- Traceability from the highest requirement to the lowest level of verification allows a demonstration of how each requirement was verified.

Engines

Formal engines are the algorithms that determine whether the RTL behaviour is correct compared to the properties you have written. Today’s commercial property checkers have many engines, each suited to solving a different class of problem. So which formal engine should a user pick for any one job?

The answer, in most cases, is to allow the tool to choose, based on efficient engine orchestration. Orchestration is the ability of the engine to communicate, share data, and use heuristics to understand the properties and the design while ensuring no property becomes starved of compute cycles. While tools do allow users to select specific engines, efficient default orchestration ensures that these dedicated settings are required for only a minimal set of properties.

Good orchestration also ensures that set-up doesn’t become stale. Scripts are often re-used from one project to the next, but specific engine settings for your project today will not necessarily be the most efficient for your next project. Avoiding the use of specific engine settings can ensure you get better results each time.

Coverage

There are typically two types of usage for formal verification— bug hunting and assurance. Bug hunting enables the user to pour a large number of properties into the formal tool in an attempt to uncover any latent bugs. Inconclusive results are not a worry, as proofs are not the goal. Achieving full proofs is the aim of an assurance flow, in which the complete specification for a function is captured in properties and then verified using formal. Yet how do you know you have enough properties to verify the complete behaviour? This is the role of coverage.

While using requirements-based property creation (described earlier) helps, there are three primary coverage metrics that can assist you in ensuring that none of the RTL is missed. The simplest of these is assertion density: simply measuring the number of assertions per 100 lines of RTL code. An acceptable number may range anywhere between 1 and 10 assertions.

The second metric involves measuring the minimum sequential distance (MSD). MSD allows you to detect how close to a property any one flip flop is. If a particular flip flop is several sequential steps from a property, it may require further properties to increase observability. MSD also will highlight sequential elements not observable by any property.

The third metric uses a cone-of-influence analysis to report the logic that is functionally covered by an individual property. Analysis of the full property set can then determine whether any RTL functions remain unchecked.

While it’s true that some designs will still require more advanced formal techniques, recent advances in formal property checking have made this capability accessible to more verification teams than ever before. Whether you are a formal expert or a novice, following these fundamental planning considerations can help ensure your team’s success.

For more on this topic, you can view a video of Mark’s presentation at the Formal Verification Conference, May 21 2015, in Reading, UK, here.

Author

Mark Handover has been involved in the design and verification of complex SoCs for 20 years. He currently works as an applications engineer with Mentor Graphics, focusing primarily on the areas of formal and assertion based verification.

Mark Handover has been involved in the design and verification of complex SoCs for 20 years. He currently works as an applications engineer with Mentor Graphics, focusing primarily on the areas of formal and assertion based verification.

Mark Handover has been involved in the design and verification of complex SoCs for 20 years. He currently works as an applications engineer with Mentor Graphics, focusing primarily on the areas of formal and assertion based verification.