Are you formally secure?

How do you know whether your smartphone, TV, or car is secure? Is it even clear what you mean by secure? It is easy to find examples of what is not secure, e.g. when someone can get hold of your password or credit card number, or access your car and control some aspect of it. An obvious, but impractical, definition says that these types of intrusions are impossible. However, security must be defined in a way that can be verified.

Many strategies are used to build secure systems that try to achieve this, such as ARM TrustZone, software privileges, execution levels, and data encryption. A lot of the strategies are software based, but no matter how secure software is, if there is an backdoor in the hardware allowing un-privileged software access to secret data the system is not secure. Hardware security issues are even more critical. You can’t update a system on chip (SoC) after the product is shipped to the customer, so it is essential to find any security issues before tapeout, during functional verification or even earlier.

What makes hardware secure?

The security breaches described above can be classified as either data leakage, or data integrity issues.

Data leakage means that secret or private data, such as a stored password, is accessible to someone who is not authorized to read it. A data integrity violation means that secret data can be written or modified by someone who is unauthorized to do so. A data integrity breach might then involve your password being over-written with a new one, or software writing to registers in your car’s engine control unit to make it act in unintended ways.

An SoC that is storing or processing secret data can be divided into areas that hold secret information and areas that are publicly accessible. In general, hardware is secure if data cannot cross between secure and insecure areas. More formally, this can be defined as: the chip is secure if the non-interference property holds. This means that any sequence of insecure input values is unobservable in the secure area, and any sequence of secure input values is unobservable in the insecure area. If both of these are true then data cannot be transferred between the two domains.

Secret data can be protected by encryption. Encrypted data can be stored and transmitted in ways that can be accessed by anyone. The data the encryption is protecting remains secret so long as the encryption key remains a secret. Hence the key must remain inaccessible at all times.

Another way to protect secret data is to store it in a location that can not be accessed illegally. For example, to store a credit card number on chip, it can be encrypted and stored in any memory, or it can be stored in an access-controlled memory (“secure element”).

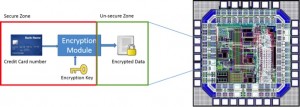

Figure 1 Verifying the security of key storage is easier in small devices than complex SoCs (Source: Synopsys)

Consider the design above. A credit card number is encrypted and stored in memory somewhere in the middle of the chip. It is secure if the credit card number and encryption key are not accessible anywhere else on the chip. If someone could write their own key and then encrypt the credit card number, it is not secure since the key is known. Verifying that the small circuit on the left is secure is easy, but when it is embedded in the middle of a complex SoC it becomes a lot more difficult.

How do you verify that the hardware is secure?

An excellent way to verify the security features of an SoC is to use formal verification. Non-interference properties of a design can easily be proven by a formal tool. Any sequence of inputs, or values of the encryption key should never be observed in non-secure areas of the chip such as primary outputs, memories or registers. There should also not be any sequences of non-secure inputs that could overwrite the encryption key.

Of course, in a real system, secure data will be used by other modules or processes, and it must be possible to update the encryption key. These accesses are intended, and if properly designed do not compromise the security of the chip. However, the complexity of an SoC means that there are likely to be bugs and unintended ways to access secure data.

The discovery of this sort of issue lends itself very well to formal analysis. The strength of formal approaches is that they can verify all possible values at once and guarantee that unintended data leakage cannot occur. This makes formal verification an important element of functional verification flows to ensure hardware security.