Applying some perspective to DFM

So far, the debate over design for manufacturing (DFM) has drawn contributions principally from four groups: designers, manufacturers, EDA vendors and consultants. It is becoming increasingly apparent that other voices need to be heard, and their positions integrated into any successful semiconductor DFM chain.

One such group is fab equipment suppliers.

The biggest player in this field is Applied Materials. The good news is that it is already supplying valuable DFM tools (see box) and, through executives such as Michael Smayling, CTO of its Maydan Technology Center (MTC) research facility, continues to confront the DFM challenge on a daily basis. In his Santa Clara office, Smayling says, he analyzes a constant flow of test structures that have been run through the MTC. The information that comes out of the MTC matters for a number of reasons, but perhaps the most important is that, by its very nature, it is ahead of the game.

“Often, what we are doing here is years ahead of its time. We are working with experimental equipment aimed at process nodes that are still some way from volume production or even a ramp,” says Smayling. “Some of the equipment will make it to market and some will prove not to be commercially viable – that’s the R&D we have to undertake. But, in working with that equipment for node n and node n+1, you inevitably need and get a view of problems that lie ahead.”

For example, Smayling notes that his company often works with EDA vendors who run their own tools, often also prototypes, through the MTC. “And in one recent case, we had a shrink-wrap tool that incorporated traditional and DFM techniques, and it presented a new set of unexpected problems. Two or three things broke the extraction models and we were getting error rates of up to 25%. What it shows is that you have to stay close to the silicon, and look at the test wafers every day.”

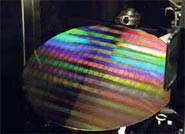

Figure 1. Running the right test structures is critical.

No one who has followed the DFM debate will be surprised by the evidence here that new forms of systematic defect are emerging at lower geometries. Such defects have been overtaking random defects since the industry’s bleeding edge passed 130nm. “In the era where we were patterning the wafer with feature sizes that were above the wavelength of light, we could extrapolate some of the issues we were going to encounter,” says Smayling. “In the sub-wavelength era, however, the results are non-linear.

“But, while we recognize that, I think we have to look to how we are addressing the task. Look at test. You can adopt two different techniques and get two completely different sets of results. The lesson is that if you don’t create the right test structures, you are not going to learn the right things.

“You need to keep your eyes open and be creative. You need to apply formalisms like FMEA [Failure Mode and Effects Analysis], understand the issues in the design space and capture the results.” Put more bluntly, Smayling says: “There’s a lot of talk on DFM, but there’s less listening. And we’re hearing a lot more marketing, rather than actual physics and research.”
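For readers unfamiliar with FMEA, its core scoring step is simple: each failure mode is rated for severity (S), likelihood of occurrence (O) and difficulty of detection (D), commonly on scales of 1 to 10, and the modes are ranked by the risk priority number RPN = S × O × D. The Python sketch below shows only that ranking step; the failure modes and their ratings are hypothetical illustrations, not data from the MTC.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """One row of an FMEA worksheet; ratings are on a 1-10 scale."""
    name: str
    severity: int    # how bad the effect is if the failure occurs
    occurrence: int  # how likely the failure is to occur
    detection: int   # how hard it is to catch before it escapes (10 = hardest)

    def __post_init__(self) -> None:
        # Reject ratings outside the conventional 1-10 range.
        for field_name in ("severity", "occurrence", "detection"):
            value = getattr(self, field_name)
            if not 1 <= value <= 10:
                raise ValueError(f"{field_name} must be in 1..10, got {value}")

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


def rank_by_rpn(modes: list[FailureMode]) -> list[FailureMode]:
    """Return failure modes sorted from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


if __name__ == "__main__":
    # Hypothetical failure modes, for illustration only.
    modes = [
        FailureMode("via open at dense pitch", severity=8, occurrence=4, detection=6),
        FailureMode("line-end pullback", severity=5, occurrence=6, detection=3),
        FailureMode("random particle short", severity=9, occurrence=2, detection=2),
    ]
    for mode in rank_by_rpn(modes):
        print(f"{mode.rpn:4d}  {mode.name}")
```

Running the script prints the modes in descending RPN order (192, 90, 36). Because the score is a product, a high-severity but rare failure mode (the particle short, severity 9) can rank below a moderate but frequent one, which is why the ratings still call for engineering judgment.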

Staying with test, he describes another scenario in which gaps in learning (and, therefore, the wrong kind of learning) present an obstacle to the development of DFM strategies.

“Test is a process in the manufacturing sequence as much as any other. We have been willing to increase the die size by a few percent to build in design for test, so why don’t we invest a few percent in test itself and improve the quality of the knowledge we are getting from it?” asks Smayling.

“Right now, the emphasis is on product test as in test-until-fail, or on test as a way of catching devices before they ship. But what if we can add some test vectors and get an analogy of the character of the chip that we can go back and validate against the process? That could have an enormous impact – and if you want to have these DFM yield models, they should include product test.”

The catch, of course, is: who ‘pays’ for that?

“Again, we hear people talk about the importance of DFM, but, in many cases, they have not faced up to the reality that some costs are going to go up, if we want to avoid ongoing problems with yield,” says Smayling.

“So, we need to look at how they could be getting important value out of that kind of research, and look at the process to see where elements are missing.”

Smayling does point out, however, that some chipmakers are an exception. As a former Texas Instruments fellow, he can attest that TI has historically ensured ongoing traffic between its design and manufacturing operations, involving senior staff on both sides. “It’s right there in the business model,” he adds.

Some trends may be preventing the right type of collaboration; cost is very much to the fore, but hardly alone. And, although Smayling himself does not say so specifically, there is a scenario in which it could be to a foundry’s advantage to carry the burden.

Cutting mask verification time by 90%

Applied Materials is already a major player in the DFM space. It recently launched Applied OPC Check, which automates the qualification of chip designs based on optical proximity correction (OPC). Used in conjunction with the company’s VeritySEM CD-SEM metrology system, the tool cuts mask verification time by 90% compared with manual methods, and is specifically aimed at the development of chips at 65nm and below.

OPC Check uses EDA OPC design data to automatically create accurate metrology recipes, then directs VeritySEM to measure thousands of sites on a wafer. This automated sequence can be completed in just a few hours, rather than days or weeks with manual methods.

The metrology data is sent back to OPC Check and the EDA systems, where it can be analyzed and used for model building, mask qualification and process window characterization.
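The comparison at the heart of such a loop can be illustrated with a short Python sketch: for each measured site, subtract the critical dimension (CD) the OPC model predicted from the CD-SEM measurement, summarize the model error, and flag sites whose residual exceeds a tolerance. This is a generic model-versus-wafer residual check, not Applied’s implementation; the `Site` fields, the 2nm tolerance and the sample values are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Site:
    """A hypothetical metrology site: predicted vs. measured CD, in nanometres."""
    site_id: str
    predicted_cd_nm: float  # CD predicted by the OPC model at this site
    measured_cd_nm: float   # CD reported by the CD-SEM at this site

    @property
    def residual_nm(self) -> float:
        """Measured minus predicted CD; positive means the line printed wider."""
        return self.measured_cd_nm - self.predicted_cd_nm


def rms_residual(sites: list[Site]) -> float:
    """Root-mean-square model error across all sites."""
    if not sites:
        raise ValueError("need at least one site")
    return math.sqrt(sum(s.residual_nm ** 2 for s in sites) / len(sites))


def flag_outliers(sites: list[Site], tolerance_nm: float) -> list[Site]:
    """Sites whose |measured - predicted| exceeds the tolerance."""
    return [s for s in sites if abs(s.residual_nm) > tolerance_nm]


if __name__ == "__main__":
    # Hypothetical measurements, for illustration only.
    sites = [
        Site("A1", predicted_cd_nm=65.0, measured_cd_nm=65.8),
        Site("A2", predicted_cd_nm=90.0, measured_cd_nm=89.1),
        Site("B7", predicted_cd_nm=65.0, measured_cd_nm=68.5),  # model misses here
    ]
    print(f"RMS residual: {rms_residual(sites):.2f} nm")
    for s in flag_outliers(sites, tolerance_nm=2.0):
        print(f"flag {s.site_id}: residual {s.residual_nm:+.1f} nm")
```

A real flow would choose its own statistics and tolerances; the point is only that automated measurement at thousands of sites makes this per-site comparison cheap enough to run routinely.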

OPC Check is part of Applied’s Resolution program, which aims to cut the time and cost of resolving yield-limiting defects. More information on this aspect of its DFM strategy can be found at www.appliedmaterials.com/resolution/.

“There is talk about bringing the manufacturing element as far up the design chain as possible. I think we want to be very careful here,” Smayling notes.

“And the reason for that is you get such integration that either you can only use one supplier – and the way fabless companies use foundries is that most prefer to have a second source, at least – or get into a situation where someone tunes just one knob in the process and you could be looking at an entire re-spin on the design.”

A very important caveat needs to be added. Smayling does not think that DFM is doomed. Far from it.

From his current vantage point, at one remove from the designer-manufacturer relationship, he is instead urging others to step back and look at the bigger picture. “If you look at the design issue and the integration of an understanding of manufacturing variability, there is an appropriate and important point to introduce after the RTL/synthesis stage,” he says. “It will help ensure you do not end up with a chain that is too restrictive.”

Smayling also believes there is already a clear analogy for how manufacturing data could be fed back to design in a powerful way.

“We need to do it, and what we need to do is find something like SPICE, a compact model for encapsulating complex information that works for DFM,” he says.

And once the industry does wake up to the new realities and challenges posed by DFM, Smayling says the opportunities will be amazing.

“If you listen to some of the talk, it’s all about pain,” he says. “The truth is that we’re now in a golden era for IC design. It’s now not so much about just scaling to a set of rules – they don’t apply as much anymore. Instead, engineers have to look to the architecture and the RTL and really bring out and develop their design skills to get better performance.”