True random number generators for a more secure IoT

An analysis of what it takes to build true random number generators that can provide a strong cryptographic basis for systems security, especially for IoT devices.

Most of the devices that form part of the Internet of Things (IoT) need cryptographic security features to protect their users from data losses and the attendant risks of password or log-in misuse. Implementing cryptographic security is complex and often counterintuitive. One of the most difficult challenges is creating a source of true random numbers, the heart of most security systems. Many popular random ‘noise’ algorithms, such as those used in IoT devices today, turn out to be flawed, delivering a predictable and/or discoverable output. Some flaws are never (publicly) discovered, creating a false sense of security. The devices in which flaws will be discovered are those with the most egregious errors, and those that are the most popular.

Security: a group effort

One of the most important concepts that product design and management teams need to understand to implement cryptographic security is the “chain of trust.”

The metaphor is well chosen, given that if any link in a system’s security chain of trust is compromised, the system is compromised. Cryptography is just one link, so conflating “encryption” with “security” is a potentially dangerous error that can lead to poorly implemented security architectures.

Cryptography requires secrets to form the basis of a system’s cryptographic features. If the secrets are revealed, the cryptographic security is broken and the system or its data is vulnerable. Protecting the secret(s) is vital to establishing and maintaining security.

To be effective, such secrets must be:

- Unpredictable

- Uniformly distributed (i.e., there is an equal chance for any number to appear)

- Statistically independent (i.e., each secret is unrelated to previously generated secrets or secrets held or generated in other related devices)

- Secret!

In short, randomly generated secrets must appear to be perfectly random. This is harder to achieve than many developers realize. Rolling dice at a casino table is random, and creates numbers that are unpredictable, uniformly distributed, and independent from one another. Yet a single number picked at random from 1 through 6 would make a poor basis for a security system, because there’s a 1-in-6 chance of guessing it on the first try. However, rolling 120 dice and composing their values into a string of numbers from 1 through 6 would be a reasonable basis for a random number generator in a security system, since the number of possible sequences (6^120, or roughly 2^310) comfortably exceeds 2^128, the level that is consistent with the security requirements of modern cryptographic systems.
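The arithmetic behind the dice example can be checked directly. Each roll of a fair six-sided die contributes log2(6), or about 2.58, bits of entropy, and independent rolls add. The short Python sketch below (function names are ours, chosen for illustration) computes the entropy of a sequence of rolls and the smallest number of dice needed to reach a 128-bit target. It assumes the dice are perfectly fair and independent, which real physical sources rarely are.

```python
import math


def dice_entropy_bits(num_dice: int, sides: int = 6) -> float:
    """Entropy, in bits, of num_dice independent rolls of a fair die."""
    return num_dice * math.log2(sides)


def dice_needed(target_bits: int, sides: int = 6) -> int:
    """Smallest number of fair dice whose sequence carries at least target_bits."""
    return math.ceil(target_bits / math.log2(sides))


print(f"1 die:    {dice_entropy_bits(1):.2f} bits")
print(f"120 dice: {dice_entropy_bits(120):.1f} bits")
print(f"Dice needed for 128 bits: {dice_needed(128)}")
```

Under these assumptions, 50 dice are already enough to pass the 128-bit mark, so 120 dice leave a wide margin.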

Taking the guesswork out of randomness

“Hard to guess” is not a good test for randomness. To address this issue, the US National Institute of Standards and Technology (NIST) has developed the SP 800-22 statistical test suite for evaluating randomness in a data set, and SP 800-90 (in parts A, B, and C), which defines the design and testing criteria that a random-number generator (RNG) must meet to be considered random enough for cryptographic applications.
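To give a flavour of what a statistical randomness test looks like, the sketch below implements the simplest test in the SP 800-22 suite, the frequency (monobit) test, which checks whether ones and zeros occur about equally often. The function name is ours, and the 0.01 threshold mentioned afterwards is the significance level customarily used with the suite. This is an illustration, not a substitute for the full suite.

```python
import math
import os


def monobit_p_value(data: bytes) -> float:
    """Frequency (monobit) test: p-value for the balance of ones and zeros."""
    n = len(data) * 8
    if n == 0:
        raise ValueError("need at least one byte of data")
    ones = sum(bin(byte).count("1") for byte in data)
    s_n = 2 * ones - n                 # (number of ones) - (number of zeros)
    s_obs = abs(s_n) / math.sqrt(n)    # normalised test statistic
    return math.erfc(s_obs / math.sqrt(2))


# A sequence is usually treated as failing if the p-value is below 0.01.
print(monobit_p_value(os.urandom(1250)))    # 10,000 bits from the OS generator
print(monobit_p_value(b"\x00" * 1250))      # all zeros: clearly fails
print(monobit_p_value(b"\xaa" * 1250))      # 1010...: perfectly balanced
```

The last case shows why a single test is not enough: the alternating pattern is entirely predictable, yet it is perfectly balanced and so passes the monobit test. The suite applies many different tests for exactly this reason.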

The recommended architecture for a NIST SP 800-90 compliant RNG is to seed a cryptographic-quality pseudorandom number generator (PRNG) with an unknown seed value and then use the PRNG for a period of time, or to produce a quantity of random data. The PRNG is then re-seeded and used for a while, and so on. The seed for the PRNG should be a secret, random input derived from an “entropy source.” Ideally, this should be a high-quality “true RNG” (TRNG) that derives entropy from a random process such as the current flowing in a transistor or the time between radioactive decay events, and then conditions the signal to remove bias and whiten the spectrum of the resulting sequence of outputs. Entropy sources need to be controlled and monitored for factors that may affect the quality of generated entropy such as operating temperature, aging, susceptibility to electronic noise and upset, so that the randomness of their output cannot be undermined by external actors.
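The seed, generate, and re-seed cycle described above can be sketched in a few lines of Python. This is a toy built on HMAC-SHA-256 to show the control flow only; it is not one of the DRBG constructions specified in SP 800-90A. The class name, the `reseed_interval` parameter, and the use of `os.urandom` as a stand-in for a hardware entropy source are all assumptions made for illustration.

```python
import hashlib
import hmac
import os
from typing import Callable


class ReseedingGenerator:
    """Toy seeded generator that re-seeds after a fixed number of requests.

    Illustrative only: NOT an SP 800-90A compliant DRBG.
    """

    def __init__(self, entropy_source: Callable[[int], bytes] = os.urandom,
                 reseed_interval: int = 1000) -> None:
        self._entropy_source = entropy_source    # stand-in for a TRNG
        self._reseed_interval = reseed_interval
        self._key = b""
        self._requests = 0
        self.reseed_count = 0
        self._reseed()

    def _reseed(self) -> None:
        seed = self._entropy_source(32)
        # Mix fresh entropy into the existing state rather than replacing it.
        self._key = hashlib.sha256(self._key + seed).digest()
        self._requests = 0
        self.reseed_count += 1

    def generate(self, num_bytes: int) -> bytes:
        if self._requests >= self._reseed_interval:
            self._reseed()
        self._requests += 1
        out = b""
        block = 0
        while len(out) < num_bytes:
            label = b"out" + block.to_bytes(4, "big")
            out += hmac.new(self._key, label, hashlib.sha256).digest()
            block += 1
        # Advance the key so earlier outputs cannot be recomputed from it.
        self._key = hmac.new(self._key, b"next", hashlib.sha256).digest()
        return out[:num_bytes]


gen = ReseedingGenerator(reseed_interval=2)
for _ in range(3):
    print(gen.generate(16).hex())
print("seeds drawn from the entropy source:", gen.reseed_count)
```

Passing a fixed function as `entropy_source` makes the output fully reproducible, which is a reminder that the whole construction is only as unpredictable as its seed.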

NIST has also released the Federal Information Processing Standard (FIPS) 140-2, which describes how to verify, test and monitor such systems in cryptographic security modules. Together, these standards take the guesswork out of evaluating the quality of a random number generator. Internationally, other organizations have produced similar specifications and evaluation methodologies. While the details differ, they all share similar objectives and even evaluation methods to assess the quality of output from an RNG. For simplicity, we limit our discussion here to the NIST methodology.

Hardware attack vectors

There are many ways to try to hack a secured system. For example, random numbers in use as cryptographic keys have been extracted from computers by observing the radio-frequency energy emitted by the chips that use them, using standard lab equipment and a lot of statistical analysis.

It is also possible to force glitches on the I/O pins of a microprocessor or SoC to cause the chip to lock up or misbehave, leaving it vulnerable to hardware analysis or malicious software. Such attacks can also corrupt data values as they are transferred, or alter privilege levels to lower hardware defences.

Encapsulated chips can be decapsulated, exposing their memory cells, gate configurations, pins, leads, and sometimes even the values of secret keys held in non-volatile memory such as eFuse arrays. ROMs can be dumped. Buses can be monitored. Some attacks monitor the power supply to derive the circuit behaviour based on the system’s varying current draw. Fault-injection attacks feed a system carefully crafted inputs to disrupt its correct operation. In timing analysis attacks, data-dependent differences in the execution time of individual operations are used to determine the values of secret data.
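The timing-analysis idea can be illustrated with a deliberately leaky comparison routine. In the Python sketch below, `leaky_compare` is a hypothetical function that stops at the first mismatching byte and reports how many bytes it examined, which stands in for its execution time. The standard library's `hmac.compare_digest` is shown alongside as the usual software countermeasure, since it is designed so that its running time does not depend on where the inputs differ.

```python
import hmac
from typing import Tuple


def leaky_compare(secret: bytes, guess: bytes) -> Tuple[bool, int]:
    """Early-exit comparison. Returns (match, bytes examined)."""
    steps = 0
    if len(secret) != len(guess):
        return False, steps
    for s, g in zip(secret, guess):
        steps += 1
        if s != g:
            return False, steps    # returns sooner the earlier the mismatch
    return True, steps


def constant_time_compare(secret: bytes, guess: bytes) -> bool:
    """Comparison whose duration does not reveal the mismatch position."""
    return hmac.compare_digest(secret, guess)


secret = b"secret"
for guess in (b"xxxxxx", b"sxxxxx", b"sexxxx"):
    match, steps = leaky_compare(secret, guess)
    print(guess, "match:", match, "bytes examined:", steps)
```

An attacker who can measure the step count, or the time it represents, learns how many leading bytes of a guess are correct and can recover the secret one byte at a time rather than guessing it whole.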

Many of these attacks rely on the fact that although the original random number used in a cryptographic operation may be unknown, it can be discovered indirectly by observing seemingly unrelated phenomena.

Observability vs understanding

Hackers can also manipulate systems to interfere with their function, often through low-tech means. Heating a chip with a hair dryer may cause it to behave differently, perhaps because of changing propagation delays within its circuits. This kind of attack may not reveal anything about the system’s encryption method, but if it changes the system’s behaviour, it might be used as an attack weapon.

Many such attacks rely on a technique called “Bayesian estimation of discrete entropy,” which relies on the fact that given enough samples, hackers can deduce how a system works, even if they never completely understand why. The speed of modern processors makes it easy to log hundreds of thousands, or even millions, of samples to analyse.

One way to counter this approach is to make it too costly or prolonged a process to gather enough samples to determine statistically significant differences between the operation of a circuit that has been attacked and one that has not. If it takes five years to accumulate enough samples, most hackers will move on before discovering anything useful – unless the size of the reward (for example, a universal key to pay-per-view content in a set-top box) makes it worthwhile. (It is worth noting that best practice in security system design is to ensure that cracking the keys to one device does not provide any help in cracking the keys of another.)

The paradox of randomness

Many secure systems need to generate random numbers frequently, which runs counter to the desire to reduce the number of events that might reveal measurable side-channel data. To make secure secret keys, the system should also use a different random-number seed each time it generates a key.

How do we reconcile requirements to generate random numbers frequently with the need to minimise activity to protect the system from the analysis of side-channel data such as RF emissions and power fluctuations?

One problem with doing this in digital computers is that they are designed to be deterministic, working with binary data to eliminate the uncertainties of manipulating analog signals. Generating true randomness in such circuits is extraordinarily complicated, and many approaches have exploitable flaws.

Many early RNGs relied on the time of day, mathematically combining the digits representing seconds, tenths, hundredths, and even thousandths of a second, to produce an apparently random seed. However, this seed could only really be considered as a pseudorandom number, because the result is generated through a deterministic process that, if fed the exact same time of day, would return the same sequence of ‘random’ numbers. The approach fails the unpredictability test because the seed can be guessed. An attacker who can guess, or worse, set, the time-of-day clock has the ability to greatly limit the number of possible seeds used by the PRNG, and may therefore be able to exploit that weakness to break the security of the system.
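The weakness of a time-based seed is easy to demonstrate. In the sketch below, `make_key` is a hypothetical device routine that derives a key from a PRNG seeded with a millisecond timestamp, using Python's `random` module (a Mersenne Twister, which is not a cryptographic generator) as the stand-in PRNG. The timestamp value and the five-second search window are assumptions chosen for illustration. An attacker who knows roughly when the key was generated simply tries every candidate seed.

```python
import random
from typing import Optional


def make_key(seed: int, num_bytes: int = 16) -> bytes:
    """Hypothetical device routine: key from a PRNG seeded with a timestamp."""
    rng = random.Random(seed)
    return bytes(rng.randrange(256) for _ in range(num_bytes))


def recover_seed(key: bytes, earliest: int, latest: int) -> Optional[int]:
    """Try every candidate timestamp in [earliest, latest]."""
    for candidate in range(earliest, latest + 1):
        if make_key(candidate, len(key)) == key:
            return candidate
    return None


boot_time_ms = 1_700_000_123_456      # assumed moment of key generation
key = make_key(boot_time_ms)

window_ms = 5_000                     # attacker's uncertainty: +/- 5 seconds
found = recover_seed(key, boot_time_ms - window_ms, boot_time_ms + window_ms)
print("seed recovered:", found == boot_time_ms)
```

The key is 128 bits long, but the attacker only has to search about ten thousand candidates, so its effective strength is closer to 13 bits.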

Many small embedded systems that are representative of IoT devices lack even a persistent time-of-day clock that retains its value across reset or power-up cycles. It has been common to find systems like these using features like CPU cycle counters to seed their random number generators, leading to many copies of the same device all generating the exact same sequence of random numbers from the time they are reset. These systems are just waiting to be exploited.

Using technology flaws

Some RNGs rely on tiny chip-to-chip variations in the physical characteristics of semiconductor circuit components, such as the resistance of a trace or the capacitance of a part of the chip, as a source of entropy. This approach has its good and bad points. Physical differences in mass-produced parts exist, but they rely on luck, so a circuit designer might not be able to depend on the flaws being present or having enough variation over a large collection of devices to be truly unique, uncorrelated or independent of each other. Physical flaws may also fail the observability test, because they could be scanned with a microscope or measured with instruments.

On a practical level, entropy based on physical flaws is hard to duplicate, and may be hard to predict. It is a complex combination of features of the specific device, the fabrication process node, fabrication technology, and so on – so their use is not necessarily easy or reliable to move to a different manufacturing flow.

Countering the countermeasures

To thwart side-channel attacks based on observable phenomena, an ideal TRNG would not radiate any RF energy signal that correlates with internal activity, nor affect the host device’s power consumption, or expose any data on I/O pins – including internal ones. Unfortunately, all electronic devices produce observable phenomena, so the next best thing is to mask the TRNG’s behaviour.

Masking uses extra additive noise signals that are not incorporated in the TRNG’s output to shield a TRNG from side-channel attacks based on observable phenomena. This is similar to the way that mechanical devices are ‘quietened’ by masking their sound with white noise. From the outside, it should be impossible to tell what the TRNG is doing, so that it appears to behave exactly the same way regardless of key length, entropy source, or level of activity.

A TRNG should also pass the predictability test by relying on a truly random source of entropy, as defined by compliance to the NIST standards. One major part of achieving this is for the circuit or its surrounding subsystem to monitor it for correct behaviour and continually test the operation of the circuit, signalling an error if it detects a deviation from randomness.
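One of the simplest continuous checks of this kind is the repetition count test described in NIST SP 800-90B, which raises an error if the noise source emits the same value too many times in a row, as it would if it had become stuck. The Python sketch below follows our reading of that test: the cutoff is C = 1 + ceil(-log2(alpha) / H), where H is the assessed min-entropy per sample and alpha is the acceptable false-alarm probability. The function names and the default alpha of 2^-20 are our choices for illustration.

```python
import math
from typing import Iterable


def repetition_cutoff(min_entropy_per_sample: float, alpha_exp: int = 20) -> int:
    """Cutoff C = 1 + ceil(-log2(alpha) / H), with alpha = 2**-alpha_exp."""
    return 1 + math.ceil(alpha_exp / min_entropy_per_sample)


def repetition_count_test(samples: Iterable[int], cutoff: int) -> bool:
    """Return False if any value occurs `cutoff` or more times in a row."""
    last = object()    # sentinel that never equals a real sample
    run = 0
    for sample in samples:
        if sample == last:
            run += 1
            if run >= cutoff:
                return False    # source looks stuck: signal an error
        else:
            last = sample
            run = 1
    return True


cutoff = repetition_cutoff(1.0)    # assumes 1 bit of min-entropy per sample
print("cutoff:", cutoff)
print("healthy:", repetition_count_test([0, 1, 1, 0, 1, 0, 0, 1], cutoff))
print("stuck:  ", repetition_count_test([1] * 64, cutoff))
```

In hardware this amounts to a comparator and a small counter, which is why checks of this kind can run on every sample the source produces.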

A TRNG’s output should be insensitive to time, heat, voltage, and other input phenomena that might be controlled by an attacker. The physical processes that form the entropy input to the TRNG will often be sensitive to these parameters, so the TRNG design must control for and eliminate their effects on its output, or signal an error condition if it is unable to reliably operate under the given conditions.

The input entropy source used by a TRNG would ideally be a uniformly distributed random variable with no bias (all possible outputs would be equally likely). In practice, very few physical phenomena behave this way. Almost all entropy sources have a frequency spectrum with more energy in the lower frequency portion of their spectrum (a “low-pass” spectrum), and most exhibit some bias (some outputs are more likely than others). The raw entropy inputs must be conditioned to eliminate these behaviours. The two conditioning steps are called “whitening” and “unbiasing”. The whitening process produces an output sequence from the TRNG that has equal energy in all parts of its frequency spectrum, a so-called “white spectrum” or “white noise” characteristic. Unbiasing means that all output values are equally likely, when viewed over a large sample of outputs.
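A classic way to remove bias, shown here purely as an illustration and not as the conditioning method used in any particular product, is the von Neumann extractor. It reads the raw bits in non-overlapping pairs, outputs the first bit of each pair whose two bits differ, and discards pairs whose bits are equal. If the input bits are independent, the output is unbiased whatever the input bias, although much of the input is thrown away. The extractor does not remove correlation between successive bits, so on its own it does not whiten a low-pass source. The biased test source below is simulated with Python's `random` module and an assumed 80% probability of a one.

```python
import random
from typing import Iterable, List


def von_neumann_debias(bits: Iterable[int]) -> List[int]:
    """Map pairs 01 -> 0 and 10 -> 1; discard pairs 00 and 11."""
    out: List[int] = []
    it = iter(bits)
    for first in it:
        second = next(it, None)
        if second is None:       # odd bit left over at the end: drop it
            break
        if first != second:
            out.append(first)
    return out


rng = random.Random(0)           # simulated source, for demonstration only
raw = [1 if rng.random() < 0.8 else 0 for _ in range(100_000)]
clean = von_neumann_debias(raw)

print(f"raw:   {len(raw)} bits, fraction of ones {sum(raw) / len(raw):.3f}")
print(f"clean: {len(clean)} bits, fraction of ones {sum(clean) / len(clean):.3f}")
```

The drop in the number of bits is the price of unbiasing: the more biased the source, the fewer output bits survive.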

Synopsys DesignWare true random number generator IP

Synopsys has developed TRNGs that address these issues and are delivered in the form of synthesizable soft IP blocks:

- The DesignWare True Random Number Generator is classified as a ‘Live, Conditioned Digitized Noise Source’ by NIST. It combines a whitening and unbiasing circuit with a noise source that can be used to seed a PRNG, as well as provide a source of entropy.

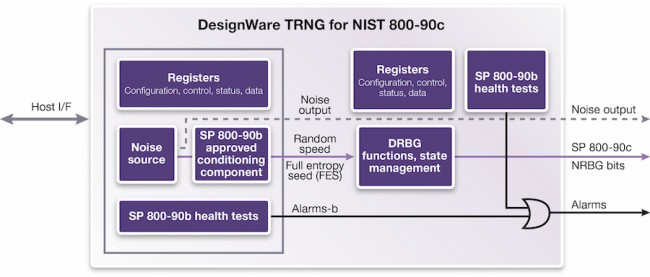

- The DesignWare True Random Number Generator for NIST SP 800-90C block is classified as a ‘Live, Enhanced Non-deterministic Random Bit Generator’ by NIST. It combines a NIST SP 800-90B-compliant conditioning circuit together with an entropy source, and a NIST SP 800-90A-compliant “DRBG_CTR” deterministic random bit generator.

Figure 1 Synopsys' DesignWare IP includes a conditioning component that helps ensure the randomness of its output (Source: Synopsys)

Closing thoughts

Security and privacy are increasingly important design considerations for basic consumer devices in the Internet of Things and beyond. Both characteristics rely on encryption, whose effectiveness relies upon access to a reliable source of truly random numbers.

Securing this is harder than it looks. ICs give off ‘side-channel’ information, such as RF emissions or variations in power consumption, that give away the activity on the chip. Bayesian analysis can draw statistically significant conclusions from multiple attacks. And physical chips can be probed and even reverse engineered, if the potential rewards seem large enough.

The security strength of many systems and applications is dependent on the quality of the source of entropy, such as a TRNG that cannot be thwarted, observed, or manipulated by physical, electronic, or statistical attacks. International standards have been developed to prove the truly random nature of a TRNG in a verifiable and statistically rigorous manner – and that is what we use to qualify our TRNG soft IP blocks.

Further information

DesignWare True Random Number Generators

White paper: True Random Number Generators for Truly Secure Systems

Author

David A Jones is a senior field application security engineer for Synopsys DesignWare Security IP. Jones has more than 20 years of experience in the software and security industries and has held a number of security R&D positions at Elliptic, Cloakware/Irdeto, and Quest/Dell, developing network security, application security, hardware security, and hybrid security solutions. Jones holds a patent on creating a method and system for dynamic platform security for device operating systems. He earned a Bachelor of Science degree in Computer Information Systems from Ohio University.

Company info

Synopsys Corporate Headquarters 690 East Middlefield Road Mountain View, CA 94043 (650) 584-5000 (800) 541-7737 www.synopsys.com