Still using Moore’s Law to beat up on the automotive industry?

It seems that whenever Moore’s Law gets cited in the press, the reporter goes on to imagine how other industries might have evolved if the same faster/better/cheaper schedule had applied to them. The auto industry, in particular, often plays the stodgy counterfactual in these thought experiments.

Only last month, The Economist opened an article on the end of Moore’s Law with a comparison to the Ferrari Daytona, the world’s fastest car in 1971, the year Intel launched the 4004 processor. After the usual sketch of exponential transistor growth (2,300 to 1.75 billion in 45 years), the reporter noted that “[i]f cars…had improved at such rates…, the fastest car would now be capable of a tenth of the speed of light”.
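
As a quick sanity check on the figures quoted above, growth from 2,300 to 1.75 billion transistors over 45 years works out to about 19.5 doublings, or one doubling roughly every 2.3 years. A minimal Python sketch of that arithmetic (the function name is illustrative only):

```python
import math

# Transistor counts and time span as quoted in The Economist's article.
START_TRANSISTORS = 2_300
END_TRANSISTORS = 1_750_000_000
YEARS = 45


def implied_doubling_time(start: float, end: float, years: float) -> tuple[float, float, float]:
    """Return (growth factor, number of doublings, years per doubling)."""
    growth = end / start
    doublings = math.log2(growth)  # how many times the count doubled
    return growth, doublings, years / doublings


growth, doublings, years_per_doubling = implied_doubling_time(
    START_TRANSISTORS, END_TRANSISTORS, YEARS
)
print(f"growth factor: {growth:,.0f}x")
print(f"doublings: {doublings:.1f}")
print(f"years per doubling: {years_per_doubling:.1f}")
```

That cadence is in line with the roughly two-year doubling period usually associated with Moore’s Law.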

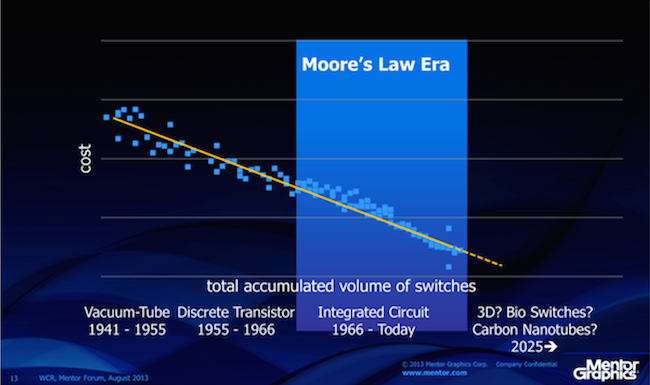

Setting aside the obvious apples-to-oranges problem with this pairing, The Economist is just the latest in a long list of media outlets to warn ominously about the end of Moore’s Law. That conclusion, however, doesn’t appear to keep my boss, Mentor Graphics’ CEO Wally Rhines, up at night. In an April 12 blog post on Scientific American, Wally makes the case that the cost per switch will keep declining well into the future thanks to the learning curve, the theory that underpinned Gordon Moore’s original observation and that will surely outlive it. Wally was similarly optimistic when The New York Times interviewed him on the topic last fall.

Figure 1. The learning curve suggests the basics of Moore’s Law will continue beyond the IC era (Mentor Graphics)
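
The learning curve is commonly modeled as Wright’s law: every doubling of cumulative production cuts unit cost by a roughly constant fraction. The sketch below assumes that simple power-law form, C(n) = C₁ · n^(−b) with b = −log₂(1 − r), where r is the learning rate. The 30% rate and the unit starting cost are hypothetical values chosen for illustration; they are not figures from Wally’s post or from Mentor Graphics.

```python
import math


def learning_curve_cost(first_unit_cost: float, cumulative_units: float, learning_rate: float) -> float:
    """Cost of the n-th unit under a Wright's-law learning curve.

    learning_rate is the fractional cost drop per doubling of cumulative
    volume (0.3 means each doubling cuts unit cost to 70% of its prior level).
    """
    # Exponent b chosen so that doubling n multiplies cost by (1 - learning_rate).
    b = -math.log2(1.0 - learning_rate)
    return first_unit_cost * cumulative_units ** (-b)


# Hypothetical parameters, used only to show the shape of the curve.
FIRST_COST = 1.0
RATE = 0.3

for n in (1, 2, 4, 8, 1024):
    print(n, round(learning_curve_cost(FIRST_COST, n, RATE), 4))
```

Because cost falls as a power of cumulative volume, the model plots as a straight line on log-log axes, which is why learning-curve charts are usually drawn that way.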

This is good news in a host of contexts far beyond computers and phones, especially the automotive industry. That business is being transformed in astonishing fashion, largely thanks to ever more powerful and less expensive information and communications technology (ICT). We still get clever Moore’s Law comparisons suggesting that cars are something of an innovation backwater, but a casual look at the changing mix of vehicles on the road quickly exposes that idea as laughable. Meanwhile, I’ll give you one guess as to which company Forbes named the world’s most innovative in 2015.

I’m loath to pick too much on the press, which generally does yeoman’s work and has been disrupted more than most by Moore’s Law. Still, I can’t resist some (lighthearted) tweaking.

Hey, Economist, when will we start seeing articles where something automotive serves as the heroic benchmark of innovation rather than the stooge? Indeed, here’s a thought: ‘Google’s self-driving cars drove more than a million miles over the course of seven years before the first algorithm-at-fault accident (and a very minor, injury-free fender bender at that). How many articles would be published without a correction if the media were held to the same level of accuracy?’

Andrew Macleod is Director of Automotive Marketing for Mentor Graphics. He has more than 15 years of experience in the automotive software and semiconductor industry, with expertise in new product development and introduction, product management and global strategy, including a focus on the Chinese auto industry.